VAHU: Visionary AI & Human Understanding - Page 3

AI Pair PM: How AI Agents Are Changing Product Requirements from Draft to Final

AI Pair PM uses two specialized AI agents to generate and refine product requirements, cutting PRD creation time by 70% and reducing post-launch bugs. Teams using this method ship faster with sharper specs - and product managers are more strategic than ever.

Cost-Quality Frontiers: How to Pick the Best Large Language Model for Maximum ROI

In 2026, the best large language model isn't the most powerful; it's the one that gives you the highest return on investment. Learn how to match tasks to cost-efficient models like Grok 4 Fast and GPT-5 Mini to slash AI costs by over 85%.

Test Coverage Targets for AI-Generated Code: What's Realistic and Useful

Traditional 80% test coverage isn't enough for AI-generated code. Learn the realistic coverage targets by risk level, why mutation testing matters, and how to avoid costly failures with practical, data-backed strategies.

Risk-Adjusted ROI for Generative AI: How to Account for Controls and Compliance

Risk-adjusted ROI for generative AI factors in compliance costs, legal risks, and model errors to give you real returns - not optimistic guesses. Learn how to calculate it and why it's now mandatory for responsible AI use.

Abstention Policies for Generative AI: When the Model Should Say It Does Not Know

Generative AI often hallucinates answers it can't verify. Abstention policies force models to stay silent when uncertain, reducing harm. Learn how models are trained to say 'I don't know' and why it matters for safety and trust.

Mathematics-Specialized LLMs vs General Models: Accuracy and Cost

Specialized math LLMs like Qwen2.5-Math-7B outperform larger general models like GPT-4 on complex problems while costing far less. Reinforcement learning (RL) training is key to balancing accuracy and general capability.

Market Structure of Generative AI: Foundation Models, Platforms, and Apps

Generative AI's market is structured into three layers: foundation models, platforms, and apps. Each plays a distinct role in driving adoption, with vertical apps now outpacing general-purpose tools. Learn how the ecosystem is evolving in 2026.

Data Minimization Strategies for Generative AI: Collect Less, Protect More

Learn how to build powerful generative AI models with less data. Discover practical strategies like synthetic data, differential privacy, and masking to protect privacy without sacrificing performance.

Privacy and Data Governance for Generative AI: Protecting Sensitive Information at Scale

Generative AI is accelerating data leaks, not solving them. Learn how to enforce privacy controls, map AI data flows, and comply with global regulations before regulators come knocking.

Structured Output Generation in Generative AI: Stop Hallucinations with Schemas

Structured output generation uses schemas to force AI models to return consistent, machine-readable data, eliminating parsing errors and reducing hallucinations in production systems. This is now a standard feature across major AI platforms.

Unit Economics of Large Language Model Features: How Task Type Drives Pricing

LLM pricing isn't one-size-fits-all. Task type, whether simple classification or complex reasoning, determines cost. Learn how input, output, and thinking tokens drive pricing, and how smart routing cuts expenses by up to 70%.

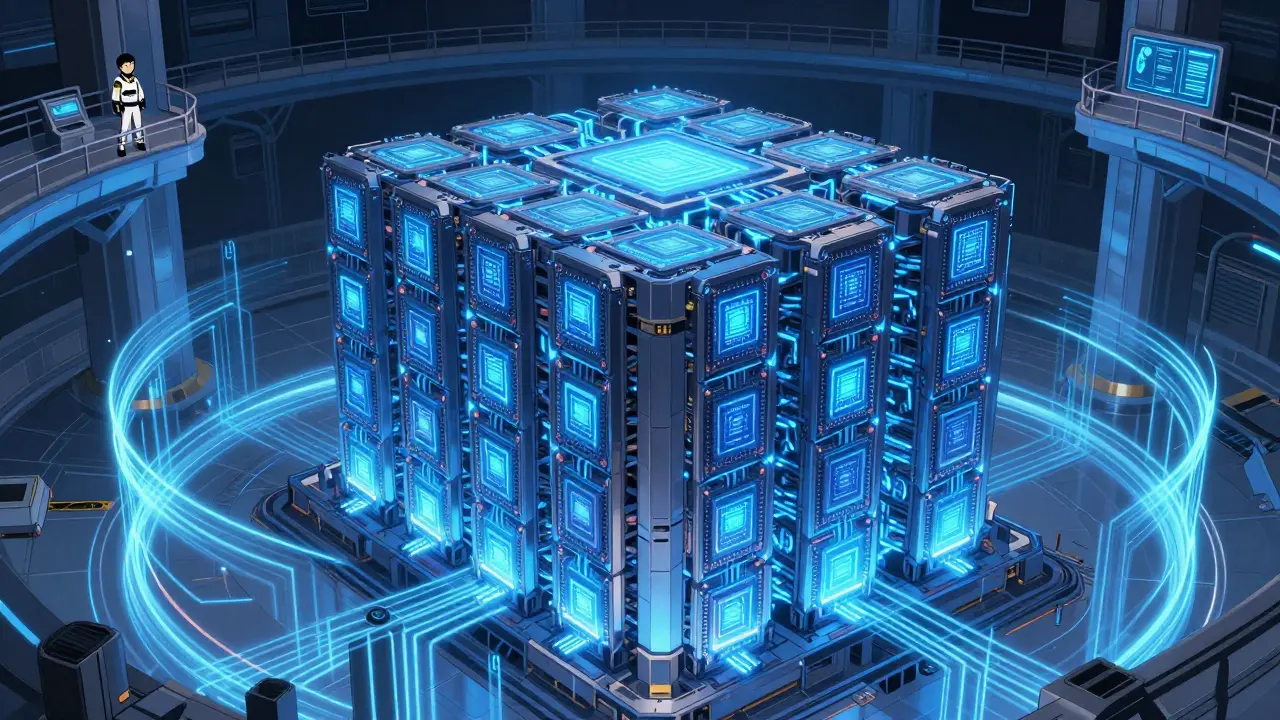

Compute Infrastructure for Generative AI: GPUs vs TPUs and Distributed Training Explained

GPUs and TPUs power generative AI, but they work differently. Learn how each handles training, cost, and scaling - and why most organizations use both.