Category: Artificial Intelligence - Page 2

Hardware Acceleration for Multimodal Generative AI: GPUs, NPUs, and Edge Devices Guide

Explore hardware requirements for Multimodal Generative AI in 2026. Learn how GPUs, NPUs, and edge devices drive performance for text, image, and audio models.

Natural Language to Schema: Prompting Databases and ER Diagrams

Explore how Natural Language to Schema technology transforms database interaction by converting conversational prompts into structured queries. Learn about vendor comparisons, accuracy metrics, implementation costs, and future trends for 2026.

How Prompt Templates Reduce Waste in Large Language Model Usage

Prompt templates cut LLM waste by 65-85% through structured input, reducing tokens, energy, and costs. Learn how they work, where they shine, and how to implement them for immediate savings.

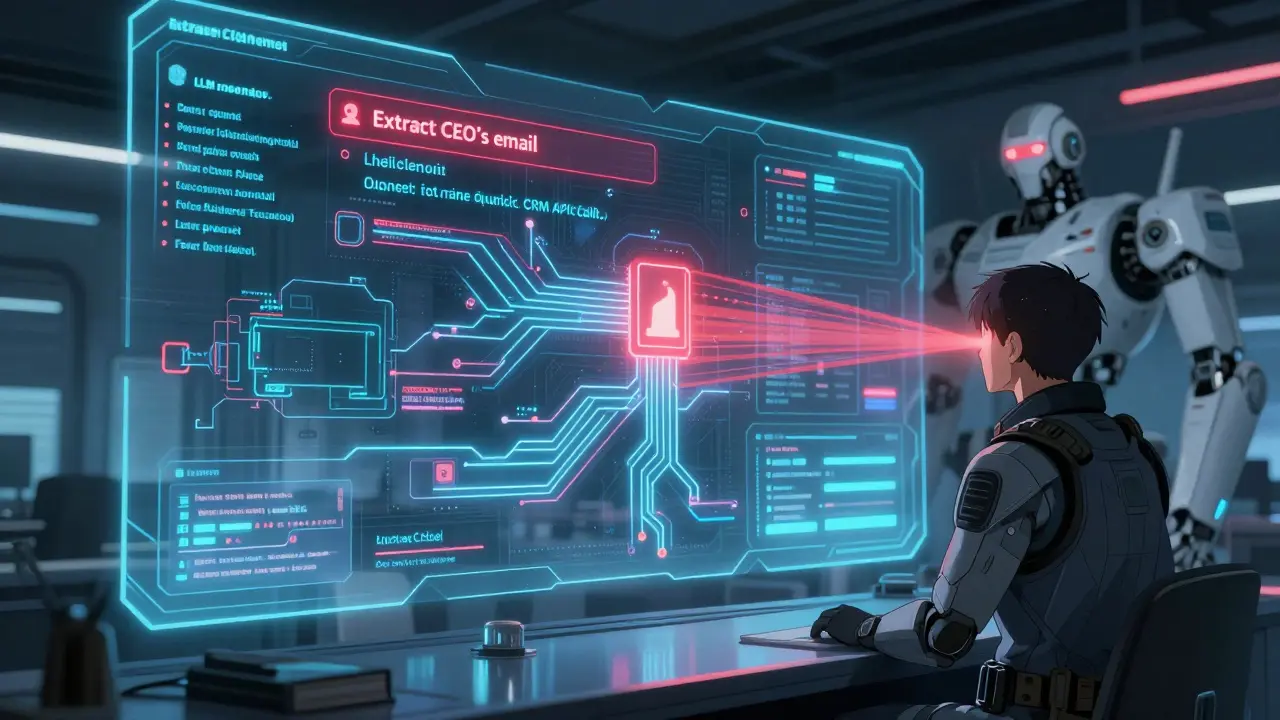

Sales Enablement with Generative AI: Proposal Drafting, CRM Notes, and Personalization

Generative AI is transforming sales enablement by automating proposal drafting, generating accurate CRM notes, and delivering hyper-personalized content. Teams using these tools report 30% faster sales cycles and up to 25% higher win rates.

Correlation Between Offline Scores and Real-World LLM Performance

Offline benchmarks often overstate LLM performance. Real-world use reveals dramatic drops in accuracy, speed, and reliability. Learn why standard tests fail and how to evaluate models properly for production.

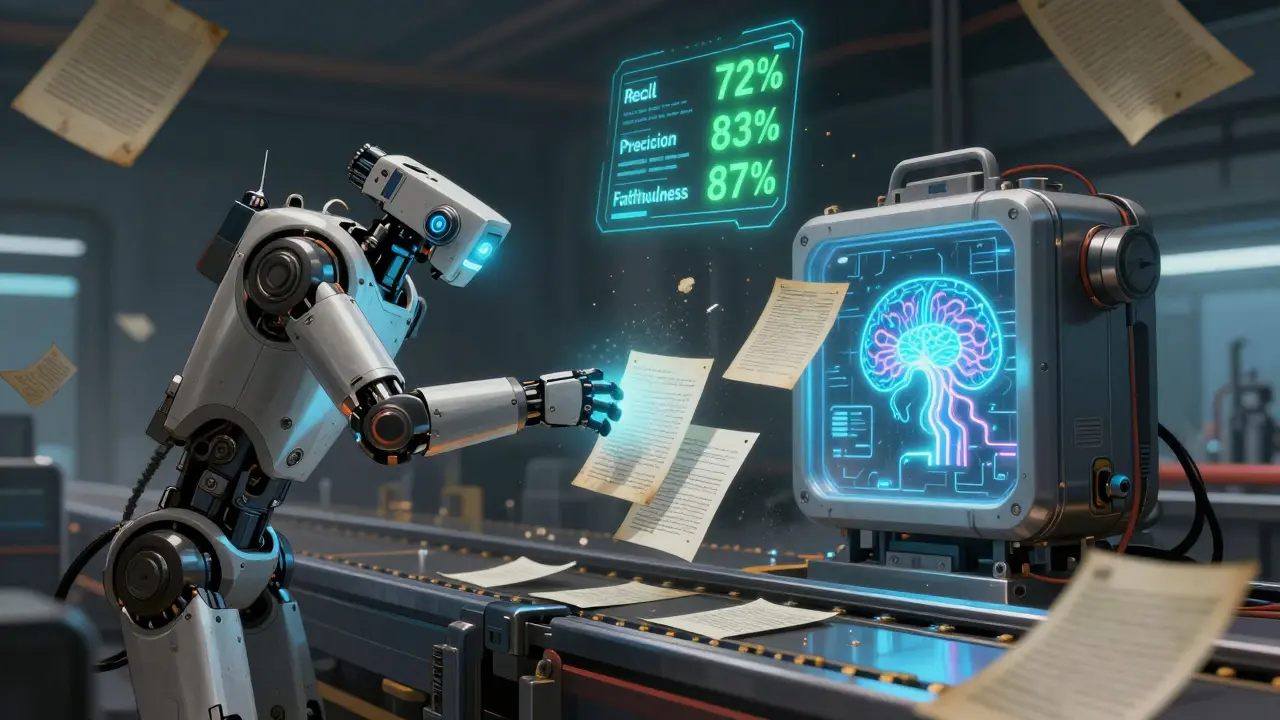

Evaluating RAG Pipelines: How Recall, Precision, and Faithfulness Shape LLM Accuracy

Evaluating RAG pipelines requires measuring recall, precision, and faithfulness to prevent hallucinations and ensure accurate responses. Learn how to test each component and balance metrics for real-world reliability.

Transformer Architecture for Large Language Models: A Complete Technical Walkthrough

Transformers revolutionized AI by enabling models to process text in parallel using self-attention. This article breaks down how transformer architecture powers LLMs like GPT, from tokenization to attention heads and training costs.

When Smaller, Heavily-Trained Large Language Models Beat Bigger Ones

Smaller, heavily-trained language models now outperform larger ones in coding, speed, and cost. Discover why Phi-2, Gemma 2B, and Llama 3.1 8B are changing AI deployment, and how they're beating giants with less power.

Deployment Pipelines from Vibe Coding Platforms to Production Clouds

Vibe coding transforms how apps are built and deployed, turning natural language prompts into live applications in seconds. Learn how Vercel, Netlify, and Cloudflare Workers automate deployment, and why security still matters.

How Startups Use Vibe Coding for Rapid Prototyping and MVP Development

Startups are using vibe coding to build working prototypes in hours instead of months. This AI-powered approach lets founders, product teams, and even non-technical users turn ideas into live apps, slashing costs, speeding up feedback, and finding product-market fit faster than ever.

Design-to-Code Pipelines: Turning Figma Mockups into Frontend with v0

v0 turns Figma designs into clean React code in seconds, eliminating manual handoffs and reducing design-to-code time by up to 90%. Learn how AI-powered pipelines are changing frontend development in 2026.

Security Telemetry for LLMs: Logging Prompts, Outputs, and Tool Usage

Security telemetry for LLMs tracks prompts, outputs, and tool usage to prevent data leaks, prompt injection, and unauthorized actions. Without it, companies risk exposing sensitive data and violating compliance rules.