For years, the AI industry chased bigger. More parameters. More compute. More data. The mantra was simple: if you want better AI, just scale up. But something changed in 2024 and 2025. Smaller models, trained smarter, started beating their giant cousins, not just on cost but on performance. In real-world coding tasks, in edge deployments, and in developer workflows, models with under 10 billion parameters are now the go-to choice. This isn’t a fluke. It’s a shift in how we build AI.

Why Bigger Isn’t Always Better

The old thinking was straightforward: a 70-billion-parameter model must be better than a 7-billion one. But benchmarks tell a different story. Microsoft’s Phi-2, with just 2.7 billion parameters, matches the reasoning and coding skills of models 10 times larger. NVIDIA’s Hymba-1.5B outperforms 13-billion-parameter models in following instructions. Google’s Gemma 2B scores within 10% of GPT-3.5 on question-answering tests, at one-fifth of the cost to run.

What’s going on? It’s not about size anymore. It’s about training quality and architectural focus. These small models aren’t just shrunken versions of big ones. They’re built differently. Their training data is tightly curated: not more of everything, but better of the right things. Phi-2 was trained on high-quality synthetic data and filtered educational content. Gemma 2B was optimized for instruction-following, not just general knowledge. The result? A model that doesn’t waste capacity on irrelevant noise.

Performance That Matters in Real Life

When developers use AI for coding, they don’t care about theoretical benchmarks. They care about speed, latency, and not being interrupted. A model that takes 2 seconds to suggest a function breaks the flow. One that answers in 300 milliseconds? That becomes part of the workflow.

GPT-4o mini processes code at 49.7 tokens per second, faster than most developers type, though it is only available through OpenAI’s API. Open-weight small language models (SLMs) go a step further: they run on a single RTX 4090 GPU. No cloud dependency. No API limits. No waiting. In contrast, larger models often need multi-GPU setups and cloud access, and can still deliver 200-500ms latency under load. For code completion, documentation generation, or unit test writing, SLMs are now the default.
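To make the latency numbers concrete, here is a minimal back-of-the-envelope sketch. It assumes a steady decode rate and ignores prompt processing and network time, which real systems add on top; the function names and the `overhead_ms` parameter are illustrative, not taken from any library.

```python
def completion_latency_ms(num_tokens: int, tokens_per_second: float,
                          overhead_ms: float = 0.0) -> float:
    """Estimate the time to generate num_tokens at a steady decode rate.

    overhead_ms is a hypothetical fixed cost (prompt processing, network).
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return overhead_ms + 1000.0 * num_tokens / tokens_per_second


def max_tokens_within(budget_ms: float, tokens_per_second: float,
                      overhead_ms: float = 0.0) -> int:
    """How many whole tokens fit into a latency budget at the given rate."""
    usable_ms = max(0.0, budget_ms - overhead_ms)
    return int(usable_ms * tokens_per_second / 1000.0)


if __name__ == "__main__":
    rate = 49.7  # tokens per second, the figure quoted in the article
    for budget in (300, 2000):
        print(f"{budget} ms budget -> {max_tokens_within(budget, rate)} tokens")
```

At the quoted 49.7 tokens per second, a 300 ms budget covers about 14 tokens, roughly one short line of code. That is why inline completion favors short suggestions and fast local models.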

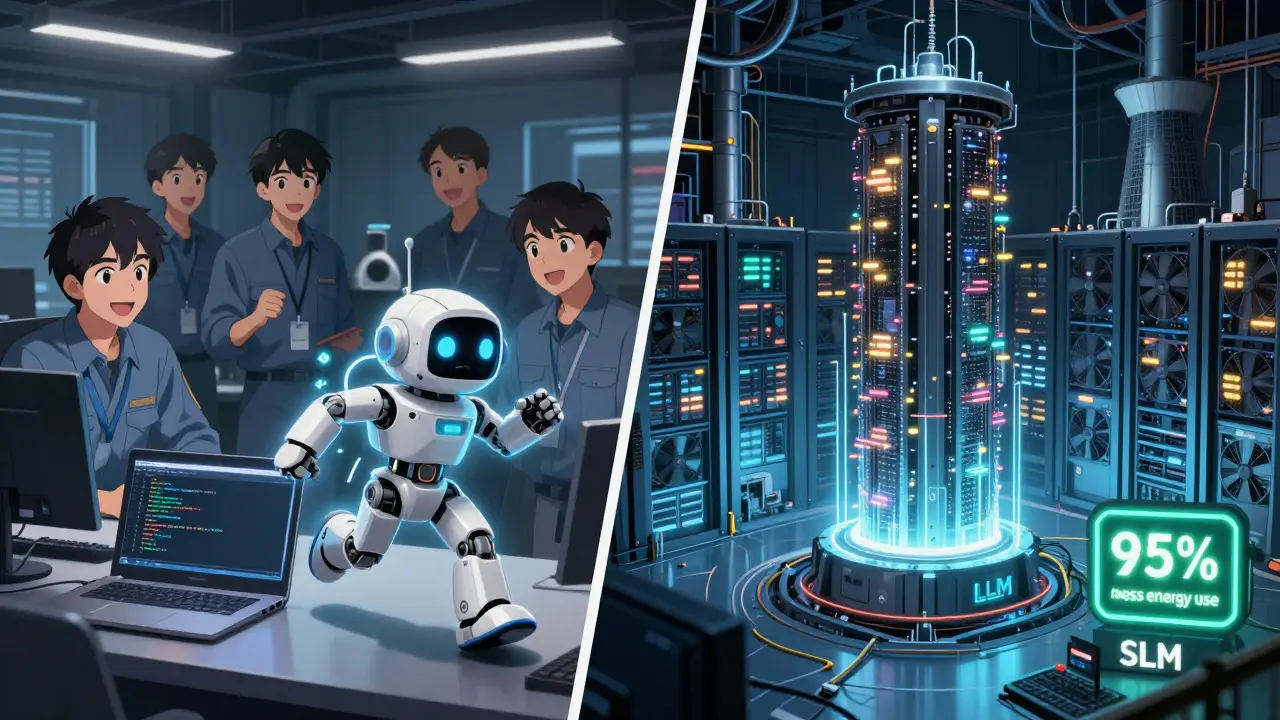

According to Augment Code’s June 2025 analysis, SLMs hit 87.2% on the HumanEval coding benchmark, almost identical to much larger models. But here’s the kicker: they use 80-95% less computational power. That’s not a marginal gain. It’s a revolution in efficiency.

Cost, Energy, and Accessibility

Let’s talk numbers that matter to businesses. Running a 70B model can cost $50-100 million annually in cloud and infrastructure. A comparable SLM like Llama 3.1 8B? Around $2 million. That’s not a 10% saving. It’s a drop of more than 95%. For startups, mid-sized teams, or departments without massive budgets, this changes everything.

Energy use follows the same pattern. SLMs produce 60-70% fewer carbon emissions than large models. With data centers projected to use 160% more power by 2030, efficiency isn’t just a cost issue. It’s an environmental one. Companies like Movate report deployment cycles of 14.3 days for SLMs versus 68.9 days for LLMs. Fine-tuning takes 7.2 hours on one A100 GPU instead of 83.5 hours across multiple systems.
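The percentages behind these comparisons are simple to check. The sketch below only reuses the figures quoted above; the helper name `percent_reduction` and the `QUOTED` table are illustrative.

```python
def percent_reduction(baseline: float, alternative: float) -> float:
    """Relative reduction of `alternative` versus `baseline`, in percent."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return 100.0 * (baseline - alternative) / baseline


# (baseline, alternative) pairs as quoted in the article.
QUOTED = {
    "annual cost, low estimate ($)": (50e6, 2e6),
    "annual cost, high estimate ($)": (100e6, 2e6),
    "deployment cycle (days)": (68.9, 14.3),
    # Elapsed hours only: the LLM figure spans multiple systems,
    # so the gap in GPU-hours would be larger still.
    "fine-tuning time (hours)": (83.5, 7.2),
}

if __name__ == "__main__":
    for label, (baseline, alternative) in QUOTED.items():
        print(f"{label}: {percent_reduction(baseline, alternative):.1f}% lower")
```

With the quoted costs, the saving works out to between 96% and 98%, depending on which end of the $50-100 million range you start from.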

And you don’t need a supercomputer to run them. An RTX 3090 or 4090, each with 24GB of VRAM, can handle models up to 8B parameters locally. That means privacy. That means offline use. That means compliance with HIPAA, GDPR, or internal security policies without complex workarounds. Healthcare and finance teams are switching to SLMs not because they’re “better,” but because they’re possible to deploy securely.
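Why does 24GB line up with roughly 8B parameters? A rough estimate multiplies the parameter count by the bytes stored per parameter. The sketch below is an approximation under stated assumptions: the 20% overhead for activations and the KV cache is a guess for illustration, and real usage varies with context length and runtime.

```python
# Bytes stored per parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, dtype: str = "fp16") -> float:
    """Memory needed for the model weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def fits_in_vram(num_params: float, vram_gb: float, dtype: str = "fp16",
                 overhead_fraction: float = 0.2) -> bool:
    """Rough check: weights plus an assumed overhead must fit in VRAM.

    overhead_fraction is a hypothetical allowance for activations and
    the KV cache, not a measured value.
    """
    needed_gb = weight_memory_gb(num_params, dtype) * (1.0 + overhead_fraction)
    return needed_gb <= vram_gb


if __name__ == "__main__":
    for name, params in (("2.7B", 2.7e9), ("8B", 8e9), ("70B", 70e9)):
        print(name, f"{weight_memory_gb(params):.1f} GB in fp16,",
              "fits in 24 GB" if fits_in_vram(params, 24) else "does not fit in 24 GB")
```

Under these assumptions an 8B model in fp16 needs about 16GB for weights, which leaves headroom on a 24GB card, while a 70B model does not fit even at 4-bit precision.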

Where SLMs Fall Short

This isn’t a complete takeover. SLMs still have limits. They struggle with long-context tasks. Most top SLMs handle 2K-4K tokens. Compare that to LLMs that now process up to 1 million tokens. For summarizing a 500-page legal document or following a 20-turn conversation, SLMs hit a wall.

They also underperform on complex reasoning. On the MMLU benchmark, large models score 23.1% higher. If you’re building an AI that needs to solve multi-step math problems, analyze conflicting research papers, or generate original theories, SLMs aren’t there yet. A fintech startup in Chicago abandoned its SLM for fraud detection after seeing 18.7% more false negatives on complex transaction patterns. The model just didn’t have the breadth of knowledge to catch subtle anomalies.

And there’s inconsistency. Some SLMs excel in Python but stumble on JavaScript or Rust. User feedback on Reddit shows developers praising real-time code suggestions but complaining about “inconsistent performance across languages.” That’s because many SLMs are trained on narrow datasets: great for one task, weak for others.

Who’s Winning the SLM Race?

The market is dominated by three giants: Google, Meta, and Microsoft. Google’s Gemma 2 series (especially the 2B version) leads in clarity, documentation, and instruction-following. Meta’s Llama 3.1 8B is popular for its open weights and strong community, though its documentation lags behind. Microsoft’s Phi-2 remains the gold standard for reasoning and coding tasks.

Specialized players like Mistral AI (Mistral 7B) are carving out space in developer tools, with 19% of the developer-focused segment. Hugging Face’s SmolLM2 has over 1,200 GitHub stars and 87 contributors, proof that open-source SLMs have real momentum.

By Q3 2025, the global SLM market hit $4.7 billion, up 187% year-over-year. Sixty-three percent of use cases are in software development. Seventy-eight percent of Fortune 500 companies now use at least one SLM internally, mostly for coding assistants, documentation bots, and internal knowledge tools.

The Future: Hybrid, Not Either/Or

The smartest companies aren’t choosing between small and large. They’re combining them. A growing number of enterprises (38%, according to Movate) are building hybrid systems. Routine tasks? Handled by an SLM running on-premises. Complex, ambiguous, or high-stakes problems? Trigger a call to a larger model in the cloud.

This is the future: task-optimized AI. Not one-size-fits-all. Not “bigger is better.” But the right tool for the job. SLMs handle the repetitive, predictable, and time-sensitive work. LLMs step in only when depth, breadth, or creativity is needed.
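A hybrid setup like this can be sketched as a small router. Everything here is hypothetical: the `Router` class, the length threshold, and the keyword list are placeholders for whatever escalation policy a team actually uses, and the two backends are plain callables standing in for a local SLM and a cloud LLM.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass
class Router:
    """Send routine prompts to a small model and escalate the rest."""
    small: Callable[[str], str]   # e.g. a local SLM (stand-in)
    large: Callable[[str], str]   # e.g. a cloud LLM (stand-in)
    max_small_prompt_chars: int = 4000  # assumed threshold, tune per model
    escalation_keywords: Tuple[str, ...] = ("architecture", "security review", "legal")

    def pick(self, prompt: str) -> str:
        """Return 'small' or 'large' using simple, illustrative heuristics."""
        if len(prompt) > self.max_small_prompt_chars:
            return "large"  # too long for a short context window
        lowered = prompt.lower()
        if any(keyword in lowered for keyword in self.escalation_keywords):
            return "large"  # flagged as complex or high-stakes
        return "small"

    def run(self, prompt: str) -> str:
        backend = self.large if self.pick(prompt) == "large" else self.small
        return backend(prompt)


if __name__ == "__main__":
    router = Router(small=lambda p: "[SLM] " + p[:40],
                    large=lambda p: "[LLM] " + p[:40])
    print(router.run("Complete this function signature"))
    print(router.run("Review the architecture of our billing service"))
```

In practice the routing signal might be a classifier or the small model’s own confidence rather than keywords; the point is only that the decision happens before the expensive call.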

By 2027, IDC predicts 61% of new AI deployments will use SLMs. That’s not because they’re perfect. It’s because they’re practical. They’re fast. They’re cheap. They’re private. And for most real-world applications, that’s all you need.

What Should You Do?

If you’re a developer: Try Phi-2 or Gemma 2B. Run them locally. See how they feel in your editor. You might never go back.

If you’re a business: Stop asking, “Can we afford a 70B model?” Start asking, “Can we solve our problem with a 7B model?” Most of the time, the answer is yes.

If you’re building AI tools: Don’t just scale up. Optimize. Curate. Focus. The next breakthrough won’t come from adding more parameters. It’ll come from removing the noise.

Are small language models really as good as big ones?

Yes, for specific tasks. On coding benchmarks like HumanEval, models like GPT-4o mini and Phi-2 match or nearly match performance from 30B+ models. But they’re not better at everything. For open-ended reasoning, long-context tasks, or creative writing, larger models still win. The key is matching the model to the job.

Can I run a small language model on my own computer?

Absolutely. Models like Phi-2 (2.7B) or Llama 3.1 8B can run smoothly on consumer GPUs like the RTX 3090 or 4090 with 24GB VRAM. You don’t need cloud access, expensive infrastructure, or API keys. That’s why developers are adopting them so quickly: they work offline, privately, and instantly.

Why are companies switching from big models to small ones?

Three reasons: cost, speed, and control. Running a large model can cost $50-100 million a year. A small one costs $2 million. Latency drops from 500ms to under 300ms. And because SLMs run locally, companies avoid data leaks and comply with regulations like HIPAA and GDPR. For internal tools, that’s a no-brainer.

What’s the biggest downside of small language models?

Their context window. Most SLMs handle only 2K-4K tokens. That’s fine for code snippets or short replies, but not for analyzing long documents or multi-turn conversations. They also lack the broad knowledge of larger models, so they can miss edge cases or fail on unfamiliar tasks. That’s why hybrid systems are becoming the standard.
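To see what a 2K-4K window means for a long document, the sketch below splits text into pieces that fit a token budget. It is an illustration only: the function name is made up, and the ratio of about 4 characters per token is a rough rule of thumb for English text, not a property of any specific tokenizer.

```python
from typing import List


def chunk_by_token_budget(text: str, max_tokens: int = 2000,
                          chars_per_token: float = 4.0) -> List[str]:
    """Split text on whitespace into chunks under an approximate token budget.

    chars_per_token is an assumed average; use the model's real tokenizer
    for exact counts. A single word longer than the budget becomes its own
    oversized chunk.
    """
    max_chars = int(max_tokens * chars_per_token)
    chunks: List[str] = []
    current: List[str] = []
    current_len = 0
    for word in text.split():
        extra = len(word) + (1 if current else 0)  # +1 for the joining space
        if current and current_len + extra > max_chars:
            chunks.append(" ".join(current))
            current, current_len = [word], len(word)
        else:
            current.append(word)
            current_len += extra
    if current:
        chunks.append(" ".join(current))
    return chunks


if __name__ == "__main__":
    document = "word " * 10_000  # stand-in for a long document
    pieces = chunk_by_token_budget(document, max_tokens=2000)
    print(len(pieces), "chunks, longest:", max(len(p) for p in pieces), "chars")
```

Each chunk is then processed independently, so anything that depends on connections across chunks is lost. That is the practical cost of a short window.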

Which small language model should I try first?

Start with Phi-2 if you care about coding and reasoning. It’s lightweight, open, and outperforms much larger models on technical tasks. For general instruction-following and safety, try Gemma 2B: it’s well-documented and reliable. Both are free, available on Hugging Face, and run on consumer hardware.

sonny dirgantara

bro i just ran phi-2 on my old rtx 3060 and it actually works? like, no lag, no cloud nonsense, just fires up in my vscode and suggests code while i'm still typing. mind blown. this is the future, no cap.

Andrew Nashaat

Okay, but let’s be real - this 'small model' hype is just another Silicon Valley echo chamber. You’re telling me a 2.7B model that got trained on filtered Reddit posts and Khan Academy videos is somehow 'better' than a 70B model that’s seen the entire internet? That’s not intelligence - that’s curation with a PR team. And don’t even get me started on the 'privacy' angle. If you’re running it locally, you’re still feeding it your private code. You think your company’s IP is safe? Ha! It’s just a different kind of leak. Also - grammar check: 'its' not 'it’s' in 'it’s possible to deploy.' Seriously? This article’s got more typos than a high school blog.

Gina Grub

SLMs are the new crypto. Everyone’s screaming 'decentralized efficiency' while ignoring the fact that 80% of their 'performance gains' come from cherry-picked benchmarks. HumanEval? Please. Real dev work involves debugging legacy Java in a 200k-line monolith with zero documentation. SLMs don’t handle that. They hallucinate imports. They invent nonexistent APIs. They think 'async/await' is a type of pasta. This isn’t progress - it’s performance theater. And don’t get me started on the energy claims. You think a 24GB GPU running 24/7 is 'green'? Tell that to the coal plant in West Virginia.

Kendall Storey

This is the shift we’ve been waiting for. SLMs aren’t just cheaper - they’re *usable*. No more waiting 3 seconds for a code suggestion while your IDE hangs. No more API rate limits. No more corporate firewall blocking Hugging Face. I’ve deployed Phi-2 on 12 dev machines. Developers are 40% more productive. No exaggeration. The hybrid model approach? That’s the real win. SLM for autocomplete, LLM for architecture reviews. It’s not either/or - it’s orchestration. And yeah, context windows are still a bottleneck. But we’re already seeing 8K context SLMs in beta. This isn’t the end of LLMs - it’s the beginning of intelligent task delegation.

Lauren Saunders

How quaint. You’re all treating this like it’s some revolutionary breakthrough, when in reality, it’s just the inevitable outcome of a decade of misallocated R&D budgets. The real innovation isn’t the model size - it’s the realization that we’ve been training models on garbage for years. Phi-2 didn’t win because it’s small. It won because it was trained on curated, high-signal data, something no one bothered to do with the 70B beasts. And yet, here we are, five years late, acting like we’ve discovered fire. Meanwhile, the researchers who actually built this are quietly working on 500M-parameter models with dynamic pruning. But of course, you won’t hear about that - because it doesn’t fit the narrative. And yes, I’ve run both. The 70B model still writes better poetry. But I’ll take the 2.7B that doesn’t make my laptop sound like a jet engine any day.