When you ask a large language model a question, it normally answers from whatever it absorbed during training. A retrieval-augmented generation (RAG) pipeline changes that: the system looks up relevant documents first, then has the model piece together a response from them. But not all RAG systems work the same. Some give you accurate, well-supported answers. Others make things up - even when they sound confident. The difference comes down to three things: recall, precision, and faithfulness. If you’re building or using RAG systems, you need to know how these metrics actually behave - not just what they mean.

What RAG Pipelines Actually Do

A RAG pipeline isn’t one tool. It’s two systems working together. First, the retriever scans a knowledge base - maybe internal documents, Wikipedia, or medical journals - to find the most relevant pieces of information. Then, the generator (usually a large language model like GPT-4 or Llama 3) uses those snippets to build a final answer. Sounds simple? It’s not. The retriever might pull the wrong docs. The generator might ignore them. Or it might twist them into something false.

That’s why evaluation matters. You can’t just say, “It gave a good answer.” You need to measure how well each part did its job. And that means looking at three specific metrics.

Recall: Did It Find the Right Info?

Recall measures whether the retriever found all the relevant documents. Imagine you’re searching for articles about diabetes treatment, and the knowledge base holds 10 that are actually relevant. If the system retrieves 7 of those 10, your recall is 70%. If it only finds 2, your recall is 20% - and you’re missing critical info.
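As a rough sketch, recall at a cutoff k is the number of relevant documents in the top k results divided by the number of relevant documents that exist for the query. The function name, the document IDs, and the labeled `relevant` list below are illustrative assumptions, not part of any particular library.

```python
def recall_at_k(retrieved_ids, relevant_ids, k):
    """Fraction of all relevant documents that appear in the top-k results."""
    relevant = set(relevant_ids)
    if not relevant:
        raise ValueError("recall is undefined when no relevant documents are labeled")
    top_k = set(retrieved_ids[:k])
    return len(top_k & relevant) / len(relevant)


# Hypothetical labels: 10 relevant documents exist for this query.
relevant = [f"doc{i}" for i in range(10)]
# The retriever returns 10 results; 7 are relevant, 3 are noise.
retrieved = [f"doc{i}" for i in range(7)] + ["noise1", "noise2", "noise3"]

print(recall_at_k(retrieved, relevant, k=10))  # 0.7
print(recall_at_k(retrieved, relevant, k=2))   # 0.2
```

Note that the denominator is the labeled set of relevant documents, which is why recall needs a ground-truth dataset and cannot be read off the retriever output alone.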

High recall means fewer blind spots. But it doesn’t mean quality. A system could return 20 documents, 15 of which are irrelevant, and still have high recall. That’s why recall alone isn’t enough. In healthcare or legal RAG systems, missing even one key document can be dangerous. A 2024 study from Stanford’s AI Safety Lab found that systems with recall below 65% failed to answer 4 out of 10 clinical questions correctly - even when the generator was state-of-the-art.

Improving recall often means tweaking how documents are chunked. A 400-character chunk might miss context. A 1200-character chunk might include noise. Testing different sizes - and using semantic chunking (breaking text by topic, not word count) - can boost recall by 15-25% in real-world tests.
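To make the chunk-size experiment concrete, here is a minimal sketch of two chunkers. `chunk_fixed` cuts character windows with a small overlap; `chunk_by_paragraph` groups whole paragraphs and is only a crude stand-in for semantic chunking, which would normally use topic or embedding boundaries. Both function names and the default sizes are assumptions for illustration.

```python
def chunk_fixed(text, size=400, overlap=50):
    """Split text into character windows of `size` that share `overlap` characters."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    step = size - overlap
    chunks = []
    start = 0
    while start < len(text):
        chunks.append(text[start:start + size])
        if start + size >= len(text):
            break  # the last window already reaches the end of the text
        start += step
    return chunks


def chunk_by_paragraph(text, max_chars=1200):
    """Group whole paragraphs into chunks of at most `max_chars` characters.

    A single paragraph longer than `max_chars` is kept whole rather than cut.
    """
    chunks, current = [], ""
    for para in (p.strip() for p in text.split("\n\n")):
        if not para:
            continue
        candidate = f"{current}\n\n{para}" if current else para
        if current and len(candidate) > max_chars:
            chunks.append(current)
            current = para
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks


doc = "A" * 900
print([len(c) for c in chunk_fixed(doc, size=400, overlap=50)])  # [400, 400, 200]
```

Running the same labeled queries against indexes built with each chunker is one way to see which setting actually moves recall on your data.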

Precision: Was the Info Actually Useful?

Precision flips the script. It asks: Of all the documents retrieved, how many were actually helpful? If the system pulls 10 documents and only 2 are relevant, precision is 20%. Low precision means the generator is working with garbage.

High precision reduces hallucinations. If the generator only gets clean, relevant context, it’s less likely to invent facts. But precision alone can be misleading. A system could return one perfect document and ignore nine others that were also useful - giving you high precision but low recall. That’s a trap.
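The trap is easy to reproduce with numbers. The sketch below assumes a labeled set of 10 relevant documents (hypothetical IDs) and compares a noisy retriever with one that returns a single perfect hit.

```python
def precision_at_k(retrieved_ids, relevant_ids, k):
    """Fraction of the top-k retrieved documents that are relevant."""
    top_k = retrieved_ids[:k]
    if not top_k:
        return 0.0
    relevant = set(relevant_ids)
    return sum(doc_id in relevant for doc_id in top_k) / len(top_k)


def recall_at_k(retrieved_ids, relevant_ids, k):
    """Fraction of all relevant documents that appear in the top-k results."""
    relevant = set(relevant_ids)
    if not relevant:
        raise ValueError("recall is undefined when no relevant documents are labeled")
    return len(set(retrieved_ids[:k]) & relevant) / len(relevant)


relevant = [f"doc{i}" for i in range(10)]
noisy = ["doc0", "doc1"] + [f"noise{i}" for i in range(8)]  # 2 of 10 results are relevant
narrow = ["doc0"]                                           # one perfect result, nothing else

print(precision_at_k(noisy, relevant, k=10))   # 0.2
print(precision_at_k(narrow, relevant, k=10))  # 1.0
print(recall_at_k(narrow, relevant, k=10))     # 0.1
```

The `narrow` retriever looks flawless on precision while missing nine of the ten relevant documents, which is why the two numbers have to be read together.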

Real-world systems need balance. A 2025 benchmark from the Allen Institute tested 12 RAG pipelines on 500 medical questions. The top performers had recall between 72% and 81% and precision between 75% and 83%. Systems with precision below 60% generated incorrect answers 47% of the time - even if recall was high.

How do you improve precision? Reranking. After the retriever pulls initial results, a second model scores them again using relevance signals. Fine-tuning the retriever on domain-specific data helps too. For example, if you’re building a legal RAG, training it on case law instead of general text makes it better at spotting relevant rulings.
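A two-stage setup can be sketched as follows. The scoring function here is a toy term-overlap score that stands in for a real second-stage model such as a cross-encoder; `overlap_score`, `rerank`, and the sample documents are all hypothetical.

```python
def overlap_score(query, doc):
    """Toy relevance score: share of query terms that also appear in the document."""
    q_terms = set(query.lower().split())
    d_terms = set(doc.lower().split())
    return len(q_terms & d_terms) / len(q_terms) if q_terms else 0.0


def rerank(query, candidates, score_fn=overlap_score, top_n=3):
    """Re-order first-stage candidates by a second-stage score and keep the best top_n."""
    ranked = sorted(candidates, key=lambda doc: score_fn(query, doc), reverse=True)
    return ranked[:top_n]


query = "statute of limitations for breach of contract"
first_stage = [
    "Office parking rules for visitors",
    "The court held that the statute of limitations for breach of contract had expired",
    "Contract templates for new vendors",
]

for doc in rerank(query, first_stage, top_n=2):
    print(doc)
```

Because `score_fn` is a parameter, the toy scorer can be swapped for a learned model without touching the rest of the pipeline.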

Faithfulness: Did the Answer Stick to the Facts?

This is where most RAG systems fail. Faithfulness measures whether the final answer is grounded in the retrieved documents. It doesn’t care if the documents are correct - it cares if the model stuck to them.

Here’s a classic example: You retrieve a document saying, “Metformin is used for Type 2 diabetes.” The model answers, “Metformin treats Type 1 diabetes and is also effective for weight loss.” That’s a faithfulness failure - it changed the facts, even though the source was clear.

Faithfulness is measured in several ways. One method checks context overlap: how much of the answer can be traced back to the retrieved text. Another uses LLMs as judges: prompt a model to say whether the answer is supported, contradicted, or unrelated to the context. A 2024 paper from Meta showed that even top models like Llama 3 had faithfulness scores as low as 68% on complex queries - meaning they invented unsupported claims over 30% of the time.
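A very rough version of the context-overlap check might look like this. It marks an answer sentence as supported when most of its words occur in the retrieved context; the 0.8 threshold, the tokenizer, and the function names are assumptions, and the score is purely lexical.

```python
import re


def tokens(text):
    """Lowercase alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def overlap_faithfulness(answer, context, threshold=0.8):
    """Return (share of supported sentences, list of unsupported sentences)."""
    context_vocab = set(tokens(context))
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", answer.strip()) if s]
    if not sentences:
        return 0.0, []
    unsupported = []
    for sentence in sentences:
        words = tokens(sentence)
        share = sum(w in context_vocab for w in words) / len(words) if words else 0.0
        if share < threshold:
            unsupported.append(sentence)
    return 1 - len(unsupported) / len(sentences), unsupported


context = "Metformin is used for Type 2 diabetes."
good = "Metformin is used for Type 2 diabetes."
bad = "Metformin treats Type 1 diabetes. It is also effective for weight loss."

print(overlap_faithfulness(good, context))  # (1.0, [])
print(overlap_faithfulness(bad, context)[0])  # 0.0
```

Lexical overlap is a blunt instrument: it penalizes honest paraphrase and can miss a one-word change such as "Type 1" for "Type 2" when the rest of the sentence matches, so it works best as a cheap first filter ahead of a judge model or human review.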

Low faithfulness often comes from two places: poor context quality (too much noise) or weak prompting. Using prompt scaffolding - structuring the input with clear instructions like “Answer only using the provided context” - can lift faithfulness by 20% or more. Also, tracking token confidence helps. If the model generates a response with low probability for key words, it’s probably guessing.
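The token-confidence idea can be sketched under one assumption: that your model client can return a log probability for each generated token. The `(token, logprob)` input format, the sample values, and the 0.5 cutoff are all illustrative.

```python
import math


def low_confidence_tokens(token_logprobs, min_prob=0.5):
    """Return (token, probability) pairs whose probability falls below min_prob."""
    flagged = []
    for token, logprob in token_logprobs:
        prob = math.exp(logprob)  # convert log probability back to a probability
        if prob < min_prob:
            flagged.append((token, round(prob, 3)))
    return flagged


# Hypothetical per-token log probabilities for one generated answer.
generated = [
    ("Metformin", -0.05),
    (" treats", -0.10),
    (" Type", -0.02),
    (" 1", -1.90),
    (" diabetes", -0.08),
]

print(low_confidence_tokens(generated))
```

Here only the token " 1" is flagged, which is exactly the word that decides whether the claim matches the source. Low probability is a hint rather than proof, so flagged answers are candidates for a closer faithfulness check.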

The Three-Stage Evaluation Framework

The best way to evaluate RAG isn’t to look at answers. It’s to look at each stage.

- Retrieval Quality: Measure recall@k, precision@k, and latency. Use MMR (Maximal Marginal Relevance) to avoid redundancy. Test with real user queries, not synthetic ones.

- Generation Faithfulness: Use context overlap, FActScore, and attribution scoring. Ask: Can every claim in the answer be traced to a source? If not, flag it.

- End-to-End Behavior: Use human ratings (1-5 scale) and live feedback. Did the user get what they needed? Did they trust the answer? Embedding metrics in production - like click-through rates or follow-up questions - reveal what really works.
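For the retrieval stage, MMR can be sketched in a few lines. Each pick maximizes relevance to the query minus a penalty for similarity to documents already selected. The toy two-dimensional vectors, the `lambda_` default, and the function names are assumptions; real systems would use embedding vectors.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def mmr(query_vec, doc_vecs, k=3, lambda_=0.7):
    """Pick k document indices, trading query relevance against redundancy."""
    selected = []
    remaining = list(range(len(doc_vecs)))
    while remaining and len(selected) < k:

        def mmr_score(i):
            relevance = cosine(query_vec, doc_vecs[i])
            redundancy = max(
                (cosine(doc_vecs[i], doc_vecs[j]) for j in selected), default=0.0
            )
            return lambda_ * relevance - (1 - lambda_) * redundancy

        best = max(remaining, key=mmr_score)
        selected.append(best)
        remaining.remove(best)
    return selected


query = [1.0, 1.0]
docs = [
    [1.0, 0.5],   # relevant
    [1.0, 0.45],  # near-duplicate of the first document
    [0.2, 1.0],   # less relevant, but covers a different direction
]

print(mmr(query, docs, k=2, lambda_=1.0))  # [0, 1]  pure relevance keeps the duplicate
print(mmr(query, docs, k=2, lambda_=0.5))  # [0, 2]  diversity penalty drops it
```

Setting `lambda_` to 1.0 reduces MMR to plain relevance ranking, which makes it easy to A/B the diversity penalty against your existing retriever.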

One company in Boulder, building a customer support RAG for SaaS products, found that their system scored 85% on retrieval and 80% on faithfulness - but only 52% on user satisfaction. Why? The answers were accurate but too technical. They added a simplification layer and saw satisfaction jump to 78%. Metrics don’t tell the whole story.

When Correctness Beats Groundedness

Most people assume that if an answer is grounded in the context, it’s good. But that’s not always true. What if the context is wrong?

Imagine a RAG system whose knowledge base contains outdated medical guidelines. It retrieves a document saying, “Aspirin prevents heart attacks in all patients.” The model answers faithfully based on that - but the answer is factually wrong. In this case, groundedness is high, but correctness is low.

Here’s the hard truth: Sometimes, you want the system to stick to bad sources. In legal or compliance use cases, you need to reflect the source material - even if it’s outdated. But in healthcare or finance, you need correctness. That’s why evaluation must be tailored. Define your priorities: Is it better to be accurate, or to be consistent with your data?

How to Test Your RAG System

Here’s a practical checklist for testing:

- Use a labeled dataset of 100-200 real questions with known correct answers.

- Test different chunk sizes: 400, 600, 1200 characters. Measure recall and faithfulness changes.

- Compare retrievers: Dense vector (e.g., sentence-transformers) vs. keyword-based (BM25). Dense usually wins, but BM25 is cheaper.

- Test reranking. Add a second-stage model to score retrieved docs. Often boosts precision by 10-15%.

- Run faithfulness checks using LLM-as-judge prompts. Example: “Based on the context, is the following answer supported, contradicted, or unrelated?”

- Measure response time. If retrieval takes more than 1.2 seconds, users will abandon the system.
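The LLM-as-judge item in the checklist can be wired up roughly like this. The sketch only builds the prompt and parses the reply; sending the prompt to a model is left to whatever client you use. `build_judge_prompt`, `parse_verdict`, and the one-word reply convention are assumptions.

```python
JUDGE_LABELS = ("supported", "contradicted", "unrelated")


def build_judge_prompt(context, answer):
    """Build a judge prompt around the checklist question."""
    return (
        "Based on the context, is the following answer supported, "
        "contradicted, or unrelated?\n\n"
        f"Context:\n{context}\n\n"
        f"Answer:\n{answer}\n\n"
        "Reply with exactly one word: supported, contradicted, or unrelated."
    )


def parse_verdict(raw_reply):
    """Return the label if the reply is exactly one known label, else None."""
    cleaned = raw_reply.strip().strip(".!\"'").lower()
    return cleaned if cleaned in JUDGE_LABELS else None


prompt = build_judge_prompt(
    "Metformin is used for Type 2 diabetes.",
    "Metformin treats Type 1 diabetes.",
)
print(prompt)
print(parse_verdict(" Contradicted.\n"))       # contradicted
print(parse_verdict("It is not supported"))    # None
```

Parsing strictly and returning `None` for anything else keeps ambiguous judge replies out of the score; those cases can be retried or sent to a human reviewer.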

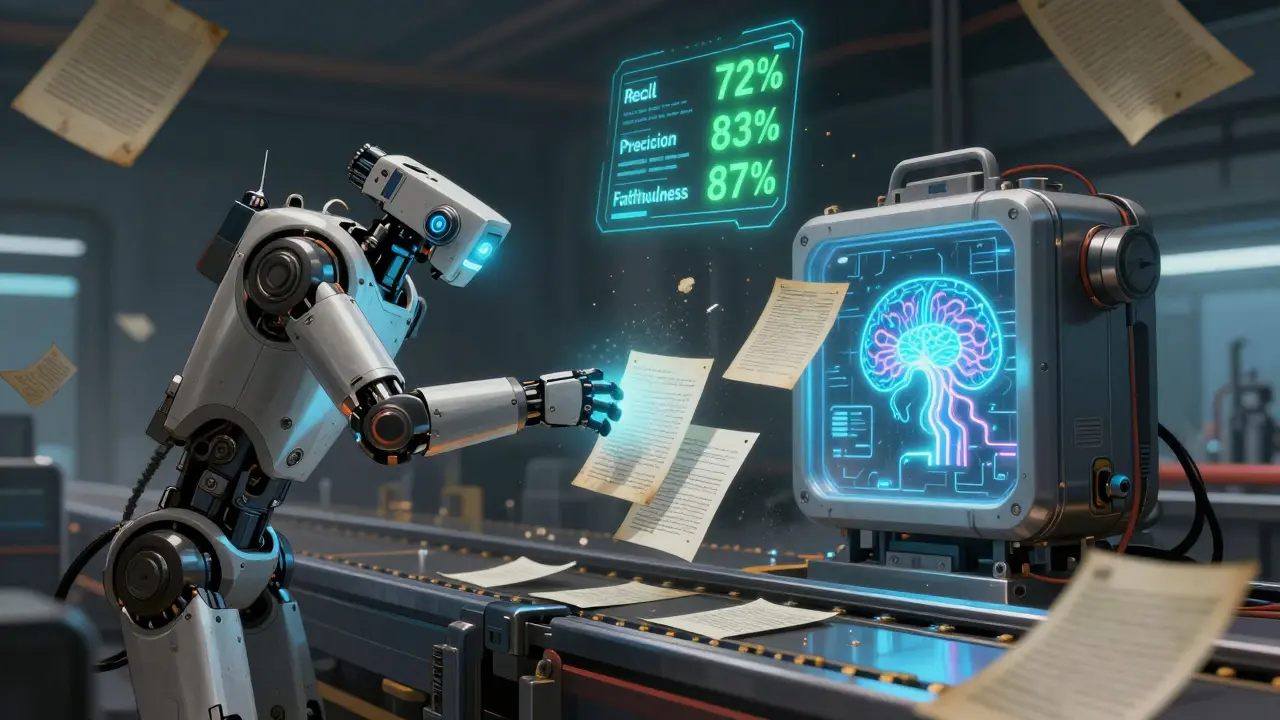

One team at a financial services firm tested 14 variations of their RAG pipeline. The best version used 600-character semantic chunks, reranked with a cross-encoder, and a prompt that forced attribution. It hit 89% recall, 83% precision, and 87% faithfulness - and cut support tickets by 40%.

Final Thought: RAG Is a System, Not a Feature

You can’t optimize recall, precision, and faithfulness in isolation. They’re connected. Improve retrieval precision, and faithfulness often improves because the generator sees less noise. Improve prompting, and the model sticks closer to whatever was retrieved. But if you ignore one, the whole system breaks.

The goal isn’t to max out every metric. It’s to find the balance that matches your use case. For a customer chatbot? Prioritize speed and faithfulness. For medical research? Prioritize recall and correctness. For legal compliance? Prioritize groundedness.

And always test with real users. No metric replaces the feeling of trust. If your users don’t believe the answer - even if it’s technically perfect - your RAG pipeline failed.

What’s the difference between recall and precision in RAG?

Recall measures how many of the relevant documents the retriever found. High recall means few missed sources. Precision measures how many of the retrieved documents were actually useful. High precision means little noise. A system can have high recall but low precision - pulling too many irrelevant docs - or high precision but low recall - missing key sources. Both matter.

Can a RAG system be faithful but still wrong?

Yes. Faithfulness means the answer matches the retrieved context - not that the context is correct. If the source material is outdated or false, the model can faithfully repeat it and still give a wrong answer. That’s why you need to audit your knowledge base too.

Is there a single metric that tells me if my RAG is working?

No. You need at least three: recall for retrieval, faithfulness for grounding, and user satisfaction for real-world performance. Metrics like BLEU or ROUGE are useless here - they measure language similarity, not factual accuracy.

How do I know if my retriever is the bottleneck?

Run a test: feed the generator the correct documents manually, without the retriever. If the answer improves dramatically, your retriever is the problem. If it stays the same, your generator or prompt needs work.
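That test can be scripted as a small ablation. The sketch below assumes you supply your own `retrieve`, `generate`, and `is_correct` callables plus questions labeled with hand-picked gold documents; the stubs, the dictionary keys, and the 0.15 gain threshold are placeholders.

```python
def diagnose_bottleneck(questions, retrieve, generate, is_correct, min_gain=0.15):
    """Compare accuracy with retrieved context against accuracy with gold context."""
    if not questions:
        raise ValueError("need at least one labeled question")
    hits_retrieved = 0
    hits_gold = 0
    for q in questions:
        retrieved_answer = generate(q["question"], retrieve(q["question"]))
        gold_answer = generate(q["question"], q["gold_docs"])
        hits_retrieved += is_correct(retrieved_answer, q["expected"])
        hits_gold += is_correct(gold_answer, q["expected"])
    acc_retrieved = hits_retrieved / len(questions)
    acc_gold = hits_gold / len(questions)
    verdict = "retriever" if acc_gold - acc_retrieved >= min_gain else "generator_or_prompt"
    return {"retrieved": acc_retrieved, "gold": acc_gold, "bottleneck": verdict}


# Stub components so the sketch runs end to end.
def retrieve(question):
    return ["Aspirin is a pain reliever."]  # a retriever that always misses


def generate(question, docs):
    return docs[0]  # a "generator" that simply echoes its first document


def is_correct(answer, expected):
    return expected.lower() in answer.lower()


questions = [
    {
        "question": "What is used for Type 2 diabetes?",
        "gold_docs": ["Metformin is used for Type 2 diabetes."],
        "expected": "Metformin",
    }
]

report = diagnose_bottleneck(questions, retrieve, generate, is_correct)
print(report)
```

If both accuracies come out high, there is no bottleneck to chase; the verdict is only meaningful when at least one of the two numbers is disappointing.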

Do I need to fine-tune the retriever?

If your domain is specialized - like law, medicine, or finance - yes. Generic retrievers treat "stroke" the same whether it’s a medical event or a painting technique. Fine-tuning with domain-specific data (using contrastive loss) can improve precision by 20% or more.
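The text above does not say which contrastive loss is meant; one common choice is an InfoNCE-style loss, sketched here for a single query. It is low when the query embedding sits closer to the relevant passage than to the negatives. The toy vectors, the temperature, and the function name are assumptions.

```python
import math


def info_nce_loss(query_vec, positive_vec, negative_vecs, temperature=0.05):
    """InfoNCE-style loss for one query: -log softmax score of the positive passage."""

    def cosine(a, b):
        dot = sum(x * y for x, y in zip(a, b))
        norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
        return dot / norm if norm else 0.0

    logits = [cosine(query_vec, positive_vec) / temperature]
    logits += [cosine(query_vec, neg) / temperature for neg in negative_vecs]
    max_logit = max(logits)  # subtract the max before exponentiating for stability
    log_sum_exp = max_logit + math.log(sum(math.exp(x - max_logit) for x in logits))
    return log_sum_exp - logits[0]


# Toy embeddings: the query is a medical question about "stroke".
query = [1.0, 0.0]
medical_doc = [0.9, 0.1]    # stroke as a medical event
painting_doc = [0.1, 0.9]   # stroke as a painting technique

well_tuned = info_nce_loss(query, medical_doc, [painting_doc])
confused = info_nce_loss(query, painting_doc, [medical_doc])
print(well_tuned < confused)  # True
```

During fine-tuning, gradients of this loss would pull the query toward the in-domain passage and push it away from the negatives; the sketch only computes the value to show what is being minimized.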

What’s the fastest way to improve RAG performance?

Start with prompt scaffolding. Tell the model: “Answer only using the provided context. Cite the source.” Then test 600-character semantic chunks. Most teams see immediate gains in faithfulness and speed with just these two changes.
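A scaffolded prompt along those lines might be assembled like this. The numbering scheme, the fallback instruction for missing answers, and the function name are assumptions added for illustration.

```python
def build_grounded_prompt(question, chunks):
    """Assemble a prompt that limits the model to numbered context chunks."""
    numbered = "\n\n".join(
        f"[{i}] {chunk.strip()}" for i, chunk in enumerate(chunks, start=1)
    )
    return (
        "Answer only using the provided context. Cite the source.\n"
        "Cite sources by their bracketed number, for example [1]. "
        "If the context does not contain the answer, say so.\n\n"
        f"Context:\n{numbered}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )


prompt = build_grounded_prompt(
    "What is metformin used for?",
    ["Metformin is used for Type 2 diabetes.", "Aspirin is a pain reliever."],
)
print(prompt)
```

Numbering the chunks gives the model something concrete to cite and makes it straightforward to check afterwards which chunk each claim points to.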

Amy P

Wow. This is the most thorough breakdown of RAG I’ve seen in months. I’ve been working with enterprise RAG systems for two years, and honestly? Most people treat recall and precision like they’re interchangeable. They’re not. One’s about coverage, the other’s about purity. And faithfulness? That’s the silent killer. I’ve seen teams spend six months optimizing retrieval, only to watch their LLM turn a 70% recall system into a hallucination factory because they used a generic prompt. No wonder users lose trust.

I run a legal RAG for contract review. We prioritized faithfulness over recall - no matter how many relevant clauses we missed, we refused to let the model invent terms. We used prompt scaffolding: ‘Answer only from the provided excerpts. If uncertain, say so.’ Faithfulness jumped from 58% to 89%. Users stopped asking ‘Is this right?’ and started trusting the output. Metrics don’t lie - but only if you measure the right things.

Ashley Kuehnel

Thank you for this! I’ve been trying to explain this to my team for weeks and kept getting ‘But the answer sounded right!’

Just wanted to add - our team switched from 400-char chunks to 600-char semantic chunks (using spaCy for topic breaks) and our recall went up 19% overnight. No fine-tuning, no new models. Just better chunking. Also, if you’re using BM25, try hybrid retrieval. Dense vectors + keyword matching gives you the best of both worlds. We saw precision jump 12% without sacrificing recall. Small change, huge impact.

Colby Havard

One must recognize, with a certain degree of intellectual rigor, that the very notion of ‘faithfulness’ in LLM-generated outputs is a fundamentally flawed metric - because it presupposes an ontological equivalence between textual source and semantic truth. The retrieved documents are not ‘facts’; they are linguistic artifacts, often contaminated by institutional bias, temporal obsolescence, or editorial negligence. To measure faithfulness is to measure conformity to error.

Thus, one cannot evaluate RAG systems in isolation. One must interrogate the provenance of the knowledge base. Is it curated? Is it audited? Or is it merely a dump of Wikipedia, corporate wikis, and scraped PDFs from 2017? If the foundation is rotten, no amount of prompt scaffolding will salvage the edifice. The real bottleneck is not the retriever - it is the epistemological laziness of the organizations deploying these systems.

Moreover, the suggestion to ‘use LLMs as judges’ of faithfulness is not merely circular - it is grotesquely ironic. You are using the very system you are trying to evaluate to evaluate itself. This is not science. This is recursion masquerading as methodology.

Tyler Springall

Oh, so now we’re measuring ‘faithfulness’? How quaint. Let me guess - next you’ll be quantifying ‘trustworthiness’ using Likert scales and calling it ‘user satisfaction.’ How very 2023. The entire field of RAG evaluation is a performative ritual for engineers who don’t want to admit their models are glorified autocomplete engines with a side of hallucination.

I’ve seen systems with 90% recall, 88% precision, and 85% faithfulness that still got fired because the CFO asked, ‘Why does it say we’re expanding to Mars?’ and the answer came from a 2021 SpaceX blog post. The system was ‘correct’ - according to its metrics. But it didn’t understand context. It didn’t understand power. It didn’t understand that the CEO had already canceled the Mars division.

You can’t evaluate RAG with metrics. You evaluate it with politics. With hierarchy. With who gets to say what’s ‘true.’ Metrics are the new PowerPoint slides. Pretty. Meaningless. And deeply, deeply dangerous.

adam smith

Good stuff. But honestly, just use 600 chunks and tell the model to cite sources. That’s 90% of the fix. No need to overcomplicate.

Mongezi Mkhwanazi

Let me be blunt: the entire RAG evaluation paradigm is a symptom of our collective failure to acknowledge that language models are not reasoning engines - they are statistical mimics trained on garbage data, deployed by corporations who refuse to audit their sources because auditing costs money and interrupts quarterly growth projections. You speak of ‘recall’ and ‘precision’ as if they are objective truths, when in reality, they are metrics engineered to make executives feel safe while their systems lie with mathematical precision.

And let’s not forget: the ‘context overlap’ method? It’s a joke. If the model paraphrases a sentence, it’s flagged as ‘not faithful.’ But if it copies word-for-word, even if the source is misinformation, it’s ‘faithful.’ This isn’t evaluation - it’s a Turing test for compliance officers.

Real-world systems don’t fail because of chunk size. They fail because the knowledge base is a graveyard of outdated policies, deleted Wikipedia pages, and internal memos written by interns who didn’t know what they were doing. No algorithm can fix that. Only accountability can.

And yet - you’ll still see teams spend six months tuning rerankers while ignoring that their entire corpus was scraped from a defunct government site that hasn’t been updated since 2015. That’s not engineering. That’s denial.

Also: the idea that ‘user satisfaction’ is a valid metric? Please. Users don’t know what they want. They just want answers that feel right. And if the system says ‘aspirin prevents heart attacks,’ they’ll believe it - because it sounds authoritative. That’s not success. That’s a liability waiting to happen.

Mark Nitka

Love this breakdown. But I want to push back gently on one thing: the idea that ‘faithfulness’ should always be prioritized. In customer support, I’ve seen cases where a user asks, ‘What’s my refund policy?’ and the retrieved doc says ‘Refunds processed in 7–10 days.’ But the company changed it to 3 days last month. The model stuck to the old doc - faithful, but wrong.

That’s when you need to let the model say: ‘I found conflicting info. The latest policy says 3 days. The doc says 7–10. Which would you like me to follow?’

Maybe we need a fourth metric: ‘Adaptability.’ Not just faithful to the source - but aware of when the source is outdated. That’s the next frontier.

Kelley Nelson

While the empirical framework presented herein is methodologically sound, one must interrogate the underlying assumption that these metrics - recall, precision, faithfulness - are sufficient for operational deployment. The very lexicon employed suggests a positivist epistemology that is increasingly untenable in the post-truth era. Truth, in this context, is not an objective state but a negotiated artifact.

Furthermore, the suggestion to employ LLMs as judges of faithfulness constitutes a form of epistemic recursion that undermines the credibility of the entire evaluation paradigm. If the judge is the same system being judged, then the validation process is not only circular but self-reinforcing.

One might reasonably argue that the true metric of success is not quantifiable at all. It is the absence of user inquiry. The silence after an answer. The lack of follow-up. That is the true measure of trust. And it cannot be captured in a spreadsheet.

Aryan Gupta

Okay, but what if the knowledge base was compromised? What if someone injected false documents into your RAG system? You think recall and precision will save you? No. They’ll just make the lie look more credible. I’ve seen this happen. A competitor uploaded fake medical guidelines into a hospital’s RAG. The system didn’t hallucinate-it just repeated the poison. Faithfulness? 92%. Correctness? 0%. Users died.

This isn’t about metrics. It’s about security. You need blockchain-backed provenance. You need cryptographic hashes on every document. You need audit trails that can’t be erased. And you need to assume your data is always being tampered with. Because it is.

And yet, no one talks about this. Everyone’s obsessed with chunk sizes and rerankers. Meanwhile, the hackers are already in. You’re optimizing a house while the foundation is on fire.

Also - why are we using LLMs to judge LLMs? That’s like using a broken thermometer to check if your fever is real. The entire field is built on a lie. And you’re all just polishing the lie while people get hurt.

Someone’s going to die because of this. And when they do, you’ll be the ones writing papers about ‘faithfulness’ instead of asking who had access to the knowledge base.