Choosing the right large language model (LLM) is no longer about picking the most powerful one. It’s about finding the one that delivers the most value for your money. In 2026, the gap between high-end models and budget-friendly alternatives has widened dramatically, and so has the opportunity. If you’re still using GPT-4 Turbo for every task, you’re probably overspending. The real game now is matching the right model to the right job. And that’s where the cost-quality frontier comes in.

What Is the Cost-Quality Frontier?

The cost-quality frontier is the sweet spot where you get the most performance for the least cost. Think of it like buying a car. You don’t need a Formula 1 racecar to commute to work. But you also don’t want a clunker that breaks down every week. In AI, this means skipping the $20-per-million-tokens models for simple tasks and switching to models that cost less than $1 per million tokens, without killing your output quality.

Here’s the hard truth: GPT-4-level performance used to cost $60 per million tokens in 2023. Today? It’s under $0.75. That’s a drop of more than 98%. But not all models dropped at the same rate. Some got 10x cheaper. Others only 2x. The winners? Models built with smart architecture, not just bigger training sets.

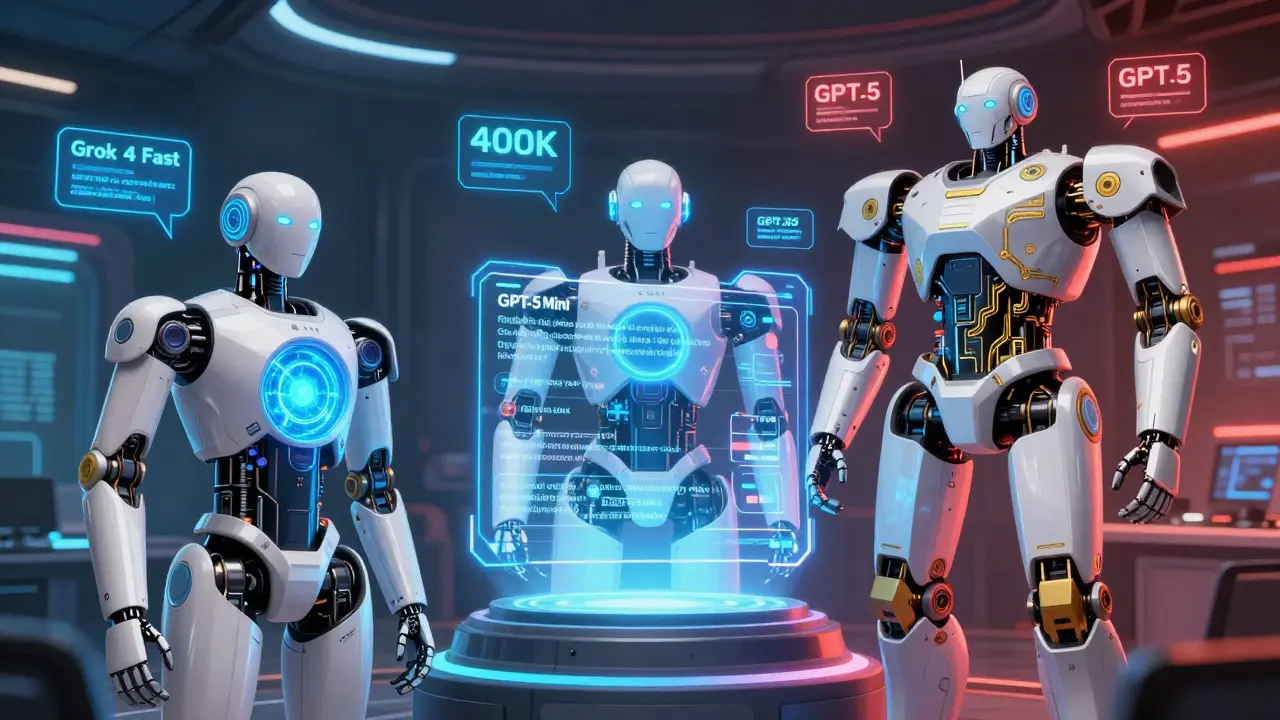

The New Value-Tier LLMs (2026 Edition)

By early 2026, five models have taken over the value tier. These aren’t watered-down versions. They’re purpose-built for efficiency. Here’s what they offer:

- Grok 4 Fast ($0.05 input / $0.50 output per million tokens): The cheapest on the market. Built for speed, not depth. Ideal for chatbots, FAQs, and short-form content.

- GPT-5 Mini ($0.25 input / $2.00 output): A balanced pick. Handles long context (400k tokens) and has a special $0.025 rate for repeated prompts. Perfect for document summarization and template-driven workflows.

- Claude 3.5 Haiku ($1.50 input / $7.50 output): Slightly pricier, but better at reasoning than Grok. Good for customer support that needs a little more nuance.

- Gemini Flash ($0.35 input / $1.70 output): Best for image-heavy tasks. Processes 1 million tokens of context and handles visuals better than rivals.

- DeepSeek-V3 ($0.14 input / $0.70 output): Strong multilingual performance. Popular in global customer service setups.

These models don’t try to be GPT-5. They’re designed to do 90% of the job at 10% of the cost. And that’s the point.
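As a rough sketch of how the price list above turns into a per-request cost, the snippet below stores the article's listed rates in a small table and picks the cheapest model for a given token mix. The model keys (`grok-4-fast` and so on) are illustrative labels, not real API model identifiers, and the rates should be checked against each vendor's current price sheet before use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    input_per_m: float   # USD per million input tokens
    output_per_m: float  # USD per million output tokens


# Rates as listed in this article. Keys are hypothetical labels.
PRICES = {
    "grok-4-fast": Price(0.05, 0.50),
    "gpt-5-mini": Price(0.25, 2.00),
    "claude-3.5-haiku": Price(1.50, 7.50),
    "gemini-flash": Price(0.35, 1.70),
    "deepseek-v3": Price(0.14, 0.70),
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request, billed separately for input and output."""
    p = PRICES[model]
    return (input_tokens * p.input_per_m + output_tokens * p.output_per_m) / 1_000_000


def cheapest(input_tokens: int, output_tokens: int) -> str:
    """Model with the lowest cost for this token mix (price only, not quality)."""
    return min(PRICES, key=lambda m: request_cost(m, input_tokens, output_tokens))
```

Because output tokens cost several times more than input tokens on every model listed, the ranking can shift for output-heavy workloads, so it is worth running this with your own typical request shape.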

How Much Can You Really Save?

Let’s say your company processes 50 million tokens a month. If you used GPT-4 Turbo (pre-2025 pricing), you’d pay around $50,000. Now? Here’s what happens with smart choices:

- 70% on Grok 4 Fast (35M tokens): $350

- 25% on GPT-5 Mini (12.5M tokens): $1,875

- 5% on GPT-5 (2.5M tokens): $5,000

Total cost: $7,225. That’s an 85.5% reduction in spending. And you didn’t sacrifice quality. You just stopped overpaying for tasks that don’t need it.
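The arithmetic behind that total can be checked in a few lines. This sketch takes the dollar amounts and token shares exactly as quoted above; it does not derive them from the per-token rates, so treat the per-tier figures as the article's inputs rather than computed values.

```python
# Monthly token volume per tier, in millions of tokens, as quoted above.
tier_tokens_m = {"Grok 4 Fast": 35.0, "GPT-5 Mini": 12.5, "GPT-5": 2.5}

# Monthly spend per tier in USD, as quoted above (not recomputed from rates).
tier_spend = {"Grok 4 Fast": 350, "GPT-5 Mini": 1_875, "GPT-5": 5_000}

baseline_spend = 50_000  # single-model baseline quoted above, in USD

total_tokens_m = sum(tier_tokens_m.values())
total_spend = sum(tier_spend.values())
reduction = 1 - total_spend / baseline_spend

print(f"tokens: {total_tokens_m}M, spend: ${total_spend:,}, reduction: {reduction:.2%}")
```

Note how the 5% of traffic sent to the premium model accounts for most of the remaining bill, which is why the share routed to the top tier is the number to watch.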

One company on Reddit switched their entire customer service bot from GPT-4 to Grok 4 Fast. Monthly cost dropped from $22,000 to $1,850. User satisfaction stayed at 93%. That’s not magic. That’s smart allocation.

When Not to Use the Cheap Models

Not all tasks are created equal. You can’t run medical diagnoses, legal contract reviews, or financial risk modeling on Grok 4 Fast and expect reliable results. Here’s why:

- Reasoning depth: Grok 4 Fast scores 18% lower than GPT-5 on chain-of-thought tasks (MIT CSAIL, Dec 2025). It’s great at answering “What’s the weather?” but struggles with “Explain why this clause violates SEC rules.”

- Hallucination rate: Value-tier models hallucinate 2-3 times more often on niche topics (Stanford CRFM, Jan 2026). If your users rely on accurate citations, stick with premium models.

- Complex context: A bigger window is not the same as better analysis. GPT-5 Mini handles 400k tokens. Grok 4 Fast accepts even more, up to 512k, but its attention system isn’t built for deep analysis. It’s fast, but shallow.

One health tech team tried using GPT-5 Mini for rare disease diagnosis. Error rate jumped to 32%. They went back to GPT-5, even though it cost 8x more. Sometimes, the cost of a mistake is higher than the cost of the model.

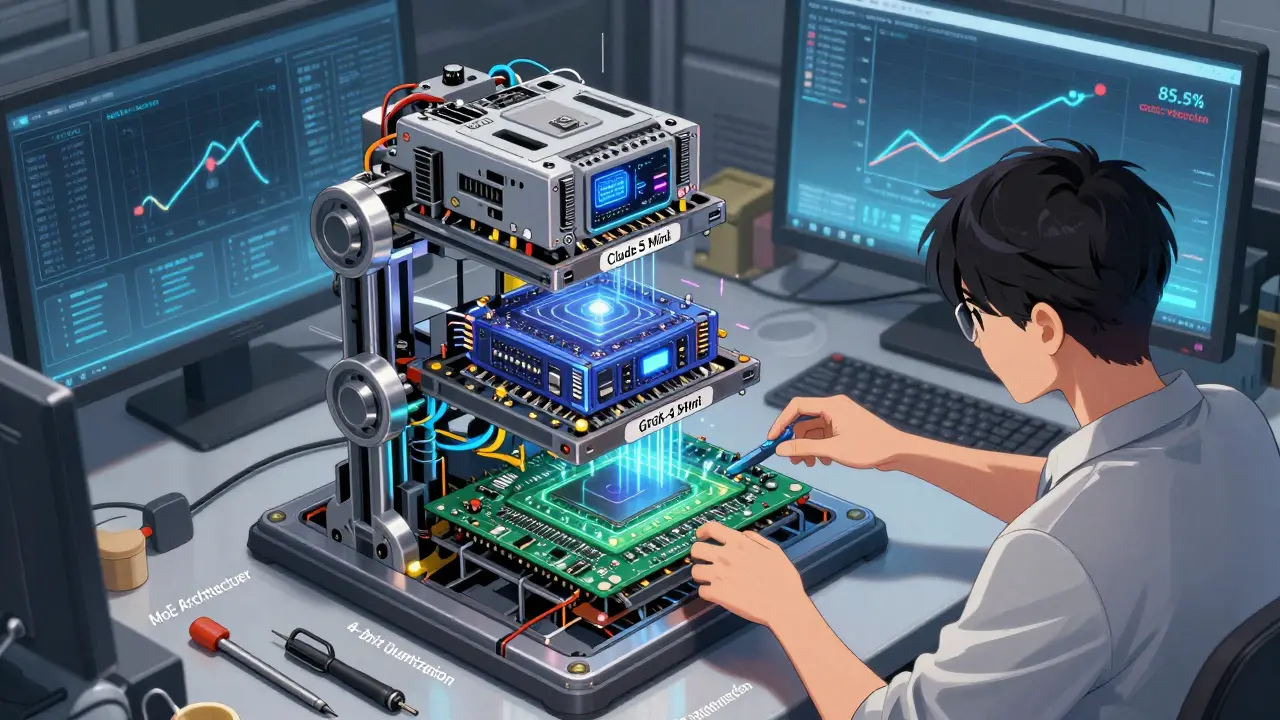

Architecture Matters More Than Brand

Why are these new models so cheap? It’s not just lower compute costs. It’s smarter design.

- Mixture-of-Experts (MoE): Models like Grok 4 Fast and GPT-5 Mini only activate 12-25% of their parameters per request. That cuts power use by 60-75%. No need to run the whole engine for a simple reply.

- 4-bit quantization: They compress model weights to use 75% less memory than 16-bit weights. Think of it like streaming HD video instead of 4K: you still see the detail, but it loads faster and costs less.

- Sparse attention: Reduces the quadratic complexity that makes long-context models expensive. Gemini Flash handles 1 million tokens because of this.

These aren’t gimmicks. They’re engineering breakthroughs that let you get 92-95% of the performance of flagship models at a fraction of the cost. The trade-off isn’t quality. It’s depth.
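The percentages in the list above follow from simple counting, which the sketch below illustrates. The 70-billion-parameter model size and the 4,096-token window are hypothetical values chosen for the example, and a sliding window is only one form of sparse attention, not necessarily what any of the named models use.

```python
from typing import Optional


def weight_memory_gb(n_params: float, bits_per_weight: int) -> float:
    """Memory for the weights alone, in GB (ignores activations and KV cache)."""
    return n_params * bits_per_weight / 8 / 1e9


def attention_pairs(seq_len: int, window: Optional[int] = None) -> int:
    """Query-key pairs scored: all pairs for dense attention, or at most
    `window` keys per query for a sliding-window (sparse) pattern."""
    if window is None:
        return seq_len * seq_len
    return seq_len * min(window, seq_len)


N_PARAMS = 70e9  # hypothetical model size

fp16_gb = weight_memory_gb(N_PARAMS, 16)
int4_gb = weight_memory_gb(N_PARAMS, 4)
memory_saving = 1 - int4_gb / fp16_gb   # 4 bits vs 16 bits

active_at_25 = N_PARAMS * 0.25          # MoE: upper end of the 12-25% range

dense = attention_pairs(1_000_000)
sparse = attention_pairs(1_000_000, window=4_096)

print(f"weights: {fp16_gb:.0f} GB -> {int4_gb:.0f} GB ({memory_saving:.0%} less)")
print(f"active parameters per request: {active_at_25:.2e}")
print(f"attention pairs at 1M tokens: {dense:.1e} dense vs {sparse:.1e} windowed")
```

The quantization figure is exact for weight storage; real deployments usually save a little less because quantization scales and higher-precision layers add overhead.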

How to Build Your LLM Stack

You don’t need one model. You need a stack. Here’s how to set it up:

- Classify your tasks: Group them by complexity. Low: chatbots, social media posts. Medium: summaries, email drafts, basic analytics. High: legal, medical, financial.

- Assign models: Use Grok 4 Fast for low. GPT-5 Mini for medium. Reserve GPT-5 or Claude 3.5 Opus for high-stakes tasks.

- Use cached input pricing: If you reuse prompts (like templates), GPT-5 Mini’s $0.025 per million tokens for repeated inputs saves even more.

- Monitor output quality: Set up automated checks for hallucinations, accuracy, and user satisfaction. Don’t assume cheaper = acceptable.

- Re-evaluate every 90 days: New models drop every quarter. What’s cheap today might be obsolete tomorrow.

Most teams start with one model. The smart ones start with three, and build a routing system that sends each request to the right engine.
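A routing layer like the one described can start as a plain lookup table. The sketch below is a minimal version using the task groups and model assignments from the steps above; the task-type strings and model labels are made up for illustration, and a production router would likely classify requests automatically rather than rely on a caller-supplied label.

```python
from enum import Enum


class Tier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Task groupings taken from the classification step above.
TASK_TIER = {
    "chatbot": Tier.LOW,
    "social_post": Tier.LOW,
    "summary": Tier.MEDIUM,
    "email_draft": Tier.MEDIUM,
    "basic_analytics": Tier.MEDIUM,
    "legal": Tier.HIGH,
    "medical": Tier.HIGH,
    "financial": Tier.HIGH,
}

# Hypothetical model labels, one per tier.
TIER_MODEL = {
    Tier.LOW: "grok-4-fast",
    Tier.MEDIUM: "gpt-5-mini",
    Tier.HIGH: "gpt-5",
}


def route(task_type: str) -> str:
    """Return the model label for a task type.

    Unknown task types fall back to the premium tier: an unnecessary
    premium call wastes money, but a wrong cheap answer can cost more.
    """
    tier = TASK_TIER.get(task_type, Tier.HIGH)
    return TIER_MODEL[tier]
```

Defaulting unknown tasks to the high tier is a design choice, not a requirement. Teams with tight budgets might instead log unknown types and route them to the medium tier while they are reviewed.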

What’s Next? The End of One-Size-Fits-All

By 2027, Gartner predicts 75% of routine enterprise AI tasks will run on lightweight models. The market is splitting into two: commodity models for high-volume tasks, and premium models for deep reasoning.

And it’s not slowing down. OpenAI just added cached pricing for GPT-5 Mini. xAI launched Grok 4 Ultra to fill the mid-tier gap. DeepSeek and Qwen are pushing multimodal efficiency below $0.05 per million tokens.

The future isn’t about picking the best LLM. It’s about picking the right LLM for each job. And that’s where the real ROI lives.

Common Mistakes to Avoid

- Using premium models for simple tasks: If you’re using GPT-5 to generate product descriptions, you’re throwing away money.

- Ignoring context length: A model that handles 128k tokens won’t help if your documents are 300k. Always match context size to your data.

- Not testing hallucinations: Cheap models make up facts. Run sample outputs through a fact-checking tool before deployment.

- Overlooking documentation: Grok 4 Fast’s docs are thin. GPT-5 Mini’s are excellent. Better docs = faster integration.

- Forgetting regulatory risk: The EU AI Act requires cost transparency for deployments over €50,000/year. Track every dollar.
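The context-length mistake in particular is easy to catch before a request is ever sent. This sketch filters models by whether a document fits, using the window sizes quoted in this article. The four-characters-per-token estimate is a rough rule of thumb for English text and the 4,000-token output reserve is an arbitrary assumption; use the vendor's tokenizer for real counts.

```python
# Context windows in tokens, as quoted in this article. Keys are hypothetical labels.
CONTEXT_WINDOW = {
    "gpt-5-mini": 400_000,
    "grok-4-fast": 512_000,
    "gemini-flash": 1_000_000,
}


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Very rough token estimate; real tokenizers vary by language and content."""
    return int(len(text) / chars_per_token) + 1


def models_that_fit(n_tokens: int, reserve_for_output: int = 4_000) -> list:
    """Models whose window holds the input plus room for the response."""
    needed = n_tokens + reserve_for_output
    return sorted(m for m, window in CONTEXT_WINDOW.items() if needed <= window)
```

For example, `models_that_fit(450_000)` drops the 400k-window model and keeps the other two, which is the check that would have flagged the 300k-document-on-a-128k-model case described above.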

What’s the cheapest LLM available in 2026?

Grok 4 Fast by xAI is the cheapest at $0.05 per million input tokens and $0.50 per million output tokens. It’s ideal for high-volume, short-response tasks like customer service chatbots and basic content generation. For comparison, GPT-4 Turbo was priced at $10 per million input tokens and $30 per million output tokens, which makes Grok 4 Fast 200x cheaper on input and 60x cheaper on output.

Can I use a low-cost LLM for medical or legal work?

Not reliably. Value-tier models like Grok 4 Fast and GPT-5 Mini have 2-3x higher hallucination rates on specialized domains. In medical diagnosis or legal analysis, even a 5% error rate can lead to serious consequences. Stick with premium models like GPT-5 or Claude 3.5 Opus for these tasks, even if they cost 8-10x more. The risk isn’t worth the savings.

How do I know if switching to a cheaper model will hurt my user experience?

Run A/B tests. Send the same user queries to both your current model and the cheaper alternative. Measure response accuracy, user satisfaction scores, and task completion rates. For example, one company tested Grok 4 Fast vs GPT-4 in customer service and found 93% satisfaction with both, so they switched. If your users notice a drop in quality, you’re not ready to switch.
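When the two satisfaction rates differ slightly, it helps to check whether the gap is larger than sampling noise. The sketch below uses a standard two-proportion z-test built from the Python standard library only. The sample counts in the usage note are invented for illustration, and the test assumes independent samples that are large enough for the normal approximation to hold.

```python
import math


def two_proportion_z(success_a: int, n_a: int, success_b: int, n_b: int):
    """Two-sided z-test for a difference between two proportions.

    Returns (z, p_value). A large p_value means the observed gap is
    consistent with chance at these sample sizes.
    """
    p_a = success_a / n_a
    p_b = success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # Both groups are all-success or all-failure: no measurable difference.
        return 0.0, 1.0
    z = (p_a - p_b) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided tail of the normal
    return z, p_value
```

With hypothetical results of 930 satisfied users out of 1,000 on the cheap model and 940 out of 1,000 on the current one, `two_proportion_z(930, 1000, 940, 1000)` gives a p-value well above 0.05, so a one-point gap at that sample size would not be evidence of a real drop.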

What’s the best LLM for summarizing long documents?

GPT-5 Mini is the top choice. With a 400k-token context window and cached input pricing at $0.025 per million tokens for repeated prompts, it’s designed for this exact use case. Gemini Flash also handles up to 1 million tokens, but its strength is multimodal input. For pure text summarization, GPT-5 Mini offers the best balance of length, cost, and reliability.
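To see what the cached rate is worth on a template-heavy workload, the sketch below prices one request with and without a cached prefix, using the GPT-5 Mini rates quoted in this article as defaults. The token counts in the usage note are hypothetical, and how a vendor decides which tokens qualify as cached (prefix matching, expiry) is not covered here, so treat the split as an assumption.

```python
def prompt_cost(
    cached_tokens: int,
    fresh_input_tokens: int,
    output_tokens: int,
    *,
    input_per_m: float = 0.25,    # USD per million uncached input tokens
    cached_per_m: float = 0.025,  # USD per million cached input tokens
    output_per_m: float = 2.00,   # USD per million output tokens
) -> float:
    """Cost in USD of one request when part of the input is billed at the cached rate."""
    return (
        cached_tokens * cached_per_m
        + fresh_input_tokens * input_per_m
        + output_tokens * output_per_m
    ) / 1_000_000


# Hypothetical request: 10,000-token template, 500 new tokens, 300-token summary.
with_cache = prompt_cost(10_000, 500, 300)
without_cache = prompt_cost(0, 10_500, 300)
```

In this example the cached request costs about a third of the uncached one. The saving shrinks as the output grows, because output tokens are billed at the full rate either way.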

Will LLM prices keep dropping?

Yes, but more slowly. Between 2020 and 2025, prices fell 50x. Since early 2024, they’ve halved every 3.7 months. By late 2026, experts predict GPT-4-level performance could cost as little as $0.10 per million tokens. However, the biggest drops are in general-purpose tasks. Premium models for deep reasoning are stabilizing, because their value is in accuracy, not speed.
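The halving figure can be turned into a simple projection. This sketch assumes the 3.7-month halving period holds constant, which the answer above suggests it will not, so read the output as an optimistic bound rather than a forecast. The $0.75 starting price and $0.10 target are the figures quoted in this article.

```python
import math


def projected_price(p0: float, months: float, halving_months: float = 3.7) -> float:
    """Price after `months`, if it halves every `halving_months`."""
    return p0 * 0.5 ** (months / halving_months)


def months_until(p0: float, target: float, halving_months: float = 3.7) -> float:
    """Months needed for the price to fall from p0 to target at a constant halving rate."""
    return halving_months * math.log2(p0 / target)


months_to_ten_cents = months_until(0.75, 0.10)
print(f"{months_to_ten_cents:.1f} months from $0.75 to $0.10 per million tokens")
```

At a constant rate the drop from $0.75 to $0.10 takes a little under a year, which is roughly in line with the late-2026 prediction quoted above.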

Next Steps: Start Small, Think in Layers

Don’t overhaul your entire AI stack overnight. Pick one high-volume, low-risk task, like answering FAQs or drafting marketing copy, and test Grok 4 Fast or GPT-5 Mini on it. Track cost, speed, and user feedback. If it works, expand. If not, you’ve lost little. The goal isn’t to be the cheapest. It’s to be the smartest.

Victoria Kingsbury

Love this breakdown. Seriously, who’s still running GPT-4 Turbo for FAQ bots? I switched our support bot to Grok 4 Fast last quarter and our cloud bill dropped like a rock. Like, 90% cheaper. And users didn’t even notice. They just got faster replies. The real win? We stopped over-engineering every single interaction. Not every question needs a PhD-level answer. Sometimes ‘It’s 72°F and sunny’ is enough.

Also, caching repeated prompts with GPT-5 Mini? Genius. We had a template for onboarding emails that was eating $1200/month. Now it’s $18. That’s not optimization. That’s free money.

PS: The 4-bit quantization bit? Mind blown. It’s like upgrading from dial-up to fiber without changing your router. The tech’s just smarter now.

Tonya Trottman

Oh please. You guys are acting like this is some revolutionary insight. ‘Use the right tool for the job’? Wow. Groundbreaking. I’m sure the first guy who used a hammer instead of a rock to drive a nail got a TED Talk too.

Also, ‘Grok 4 Fast’? Really? xAI’s naming team must’ve been on a caffeine bender. And don’t get me started on ‘GPT-5 Mini’. That’s not a model, that’s a marketing lie. Mini? It’s got 400k context. That’s not mini, that’s a goddamn mansion. They’re just repackaging old tech with new labels and calling it innovation. Classic Silicon Valley.

Rocky Wyatt

I’m not mad… I’m just disappointed. We spent six months migrating to GPT-4 Turbo because ‘it’s the future.’ Now I’m reading this and realizing we threw away $180k last year on bots that could’ve been running on $150 worth of compute. That’s not a cost mistake. That’s a leadership failure.

And don’t even get me started on the ‘hallucination rates.’ We had a client almost sue us because a cheap model claimed their contract had a clause that didn’t exist. Turned out it was a hallucination. We had to pay them $50k in goodwill. So yeah, I get it. Save money. But not at the cost of your reputation. Some things? You just pay the premium. No debate.

Santhosh Santhosh

This is actually one of the most thoughtful pieces I’ve read on LLM economics in a long time. I work in a small startup in Bangalore where every rupee counts, and I’ve been experimenting with this exact stack. We use DeepSeek-V3 for multilingual customer queries, GPT-5 Mini for internal documentation summaries, and only GPT-5 for compliance reviews. The cost difference is staggering: we went from spending 37% of our monthly revenue on AI compute to just 4%.

But what’s not talked about enough is the human side. When you switch models, you have to retrain your team. Not on how to use AI, but on how to interpret its outputs. A junior analyst once flagged a Grok 4 Fast response as ‘wrong’ because it didn’t cite sources. But it wasn’t wrong. It just didn’t have the depth to generate citations. We had to teach them: ‘Don’t expect a PhD. Expect a very fast intern who’s good at patterns.’ That cultural shift took longer than the technical migration.

Also, I’d add one thing: monitor latency, not just cost. Sometimes a $0.10 model takes 3 seconds to respond. A $0.50 model takes 0.4. In customer-facing apps, speed is part of the quality. You can’t optimize cost without optimizing UX.

And yes, I’ve seen the EU AI Act docs. We’re already logging every token, every cost, every model used. Transparency isn’t optional anymore. It’s survival.

Veera Mavalwala

Y’all are still thinking in black and white. This isn’t ‘cheap vs expensive.’ It’s ‘dumb vs smart.’

Let me tell you what I saw last week. A Fortune 500 company in Mumbai was using Claude 3.5 Opus to draft social media captions. Can you believe that? $15 per post for a tweet? I almost fell out of my chair. We helped them switch to Grok 4 Fast. Same tone. Same emoji usage. Same engagement. Cost? $0.03. They’re now posting 10x more content. Their engagement went up. Their budget went down. Their marketing director cried happy tears.

But here’s the kicker: their legal team? Still running GPT-5. Why? Because they’re not stupid. They know when to bring out the cavalry. It’s not about price. It’s about *context*. You don’t use a chainsaw to cut your nails. And you don’t use a scalpel to split logs.

Also, ‘GPT-5 Mini’? Please. That’s just GPT-4 with a facelift and a discount sticker. Don’t be fooled by the branding. Read the fine print. The real MVPs are the ones built on MoE and sparse attention. Those are the ones that’ll still be relevant in 2028. The rest? Just noise with a price tag.

Ray Htoo

This is gold. I’ve been trying to convince my team to do this for months, but everyone’s stuck on ‘GPT-4 is the best’ like it’s a religious belief. The A/B test example? Perfect. We ran one on our internal helpdesk: same 500 queries, one model vs the other. Grok 4 Fast scored 92% satisfaction. GPT-4? 94%. So we saved $12k a month and lost 2 points of user happiness. That’s a win.

Also, the cached pricing trick with GPT-5 Mini? We just implemented it for our product documentation generator. We reuse the same 12 templates 800 times a day. Now it’s $0.02 per run. That’s two cents. I’m going to print this out and tape it to our CEO’s monitor.

One thing I’d add: don’t just look at cost per token. Look at engineering time. Switching models means reworking your API layer, your monitoring, your fallback logic. Factor that in. The ‘cheap’ model might cost you two weeks of dev time. Is it still worth it? Usually yes-but don’t ignore the hidden cost.

And yes, I’m already prepping for the next round. DeepSeek-V3 just dropped a multimodal version for $0.04. I’m testing it on our product image captions. Fingers crossed.