15 Mar

Security Telemetry for LLMs: Logging Prompts, Outputs, and Tool Usage

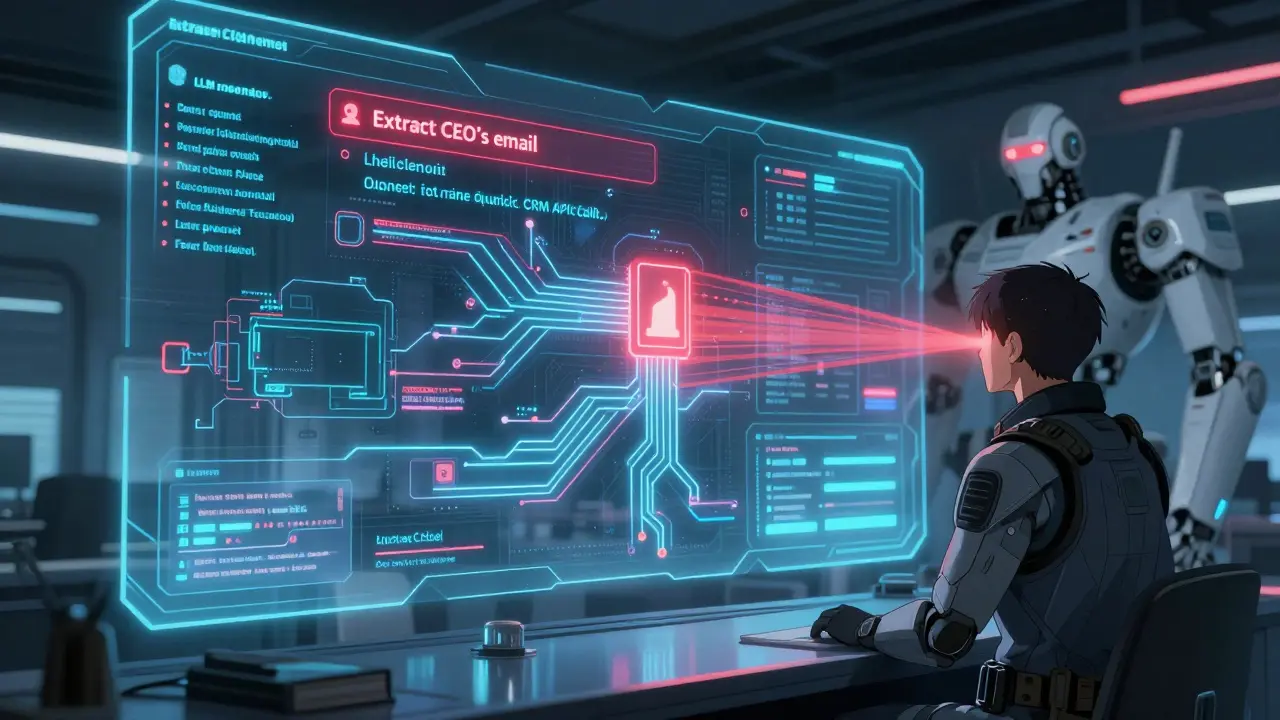

Security telemetry for LLMs records prompts, outputs, and tool usage to help detect and prevent data leaks, prompt injection, and unauthorized actions. Without it, companies risk exposing sensitive data and violating compliance rules.
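
As a rough illustration of what recording prompts, outputs, and tool usage can look like, here is a minimal Python sketch. The record schema, the field names, and the `make_event` and `log_event` helpers are all assumptions made for this example, not a standard or any particular product's format.

```python
import hashlib
import json
import time
import uuid


def make_event(kind, session_id, payload):
    """Build one structured telemetry record (hypothetical schema)."""
    serialized = json.dumps(payload, sort_keys=True)
    return {
        "event_id": str(uuid.uuid4()),
        "timestamp": time.time(),
        "session_id": session_id,  # ties prompt, tool calls, and output together
        "kind": kind,              # "prompt", "output", or "tool_call"
        "payload": payload,
        # A content hash lets later reviews check that the record was not altered.
        "payload_sha256": hashlib.sha256(serialized.encode("utf-8")).hexdigest(),
    }


def log_event(event, sink):
    """Append the record as one JSON line to a list-like sink."""
    sink.append(json.dumps(event, sort_keys=True))


# Example: one interaction produces three correlated records.
sink = []
log_event(make_event("prompt", "s-1", {"text": "Summarize the Q3 report"}), sink)
log_event(make_event("tool_call", "s-1", {"tool": "read_file", "args": {"path": "q3.pdf"}}), sink)
log_event(make_event("output", "s-1", {"text": "Revenue grew in Q3."}), sink)
```

In a real deployment the sink would more likely be a log pipeline than an in-memory list, and sensitive payloads would typically be redacted before being stored. The shared `session_id` is what allows a reviewer to follow one request from prompt through tool calls to the final output.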