Imagine asking an assistant to explain a complex engineering diagram while simultaneously listening to the hum of a machine in the background. A few years ago, that would have required three separate tools: one for reading the image, one for transcribing the audio, and one for synthesizing the explanation. Today, multimodal generative AI handles all three at once. It is a type of artificial intelligence that processes and generates content across multiple data formats, including text, images, audio, and video. It doesn't just switch between tasks; it models how these different inputs relate to each other in real time.

This technology represents a massive leap from the single-modality systems we relied on before 2023. Back then, if you wanted AI to analyze a photo, you used a vision model. If you needed it to write code, you used a language model. They didn't talk to each other. Now, with the evolution of models like GPT-4o and Llama 4, we are seeing systems that treat text, pixels, sound waves, and sensor data as parts of a single, unified conversation. This shift is changing how we build software, diagnose medical conditions, and even create entertainment.

How Multimodal Models Actually Work

To understand why this technology feels so much more "human," you have to look under the hood. Traditional AI models were silos. A text model saw words as numbers. An image model saw pixels as matrices. They had no way to compare a word to a pixel directly.

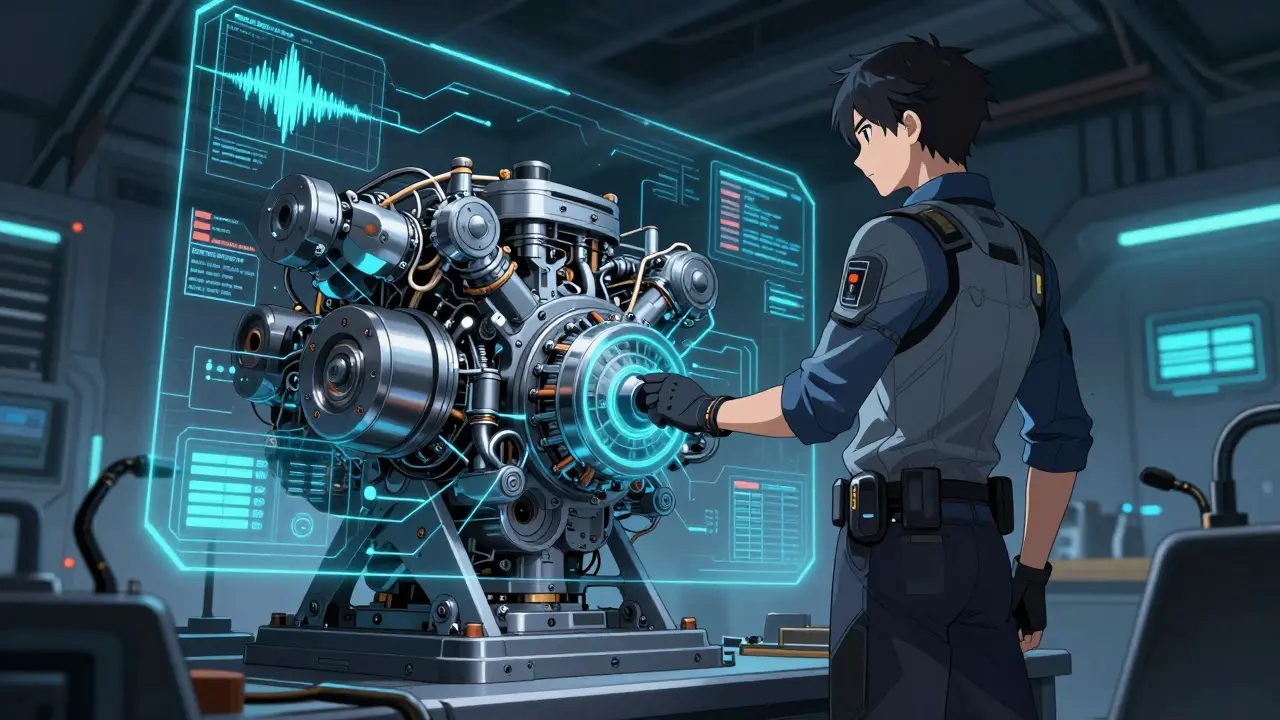

Multimodal systems solve this through a process called representation fusion. Here is the step-by-step breakdown of what happens when you upload a video of a car engine making a strange noise and ask, "What's wrong?":

- Input Processing: The system splits your input into unimodal streams. One neural network analyzes the visual frames (looking for smoke or broken parts). Another analyzes the audio waveform (listening for specific frequencies associated with mechanical failure). A third processes your text question.

- Fusion: This is the magic step. The model maps all these different data types into a shared mathematical space. It looks for relationships. Does the timing of the "clunk" sound match the visual vibration of the piston? Is the smoke visible only when the RPMs spike?

- Reasoning & Generation: Based on this combined understanding, the model generates a response. It might say, "The piston ring is failing because the audio frequency at 2kHz correlates with the visual tremor seen in frame 405."
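The three steps above can be sketched in a few lines. This is a toy illustration, not how any production model is implemented: the feature vectors stand in for hypothetical encoder outputs, and the projection matrices are hand-picked here, whereas a real model would learn them during training. The point is only that once every modality lands in the same vector space, a similarity measure such as cosine similarity can compare them directly.

```python
import math

def project(features, weights):
    """Linear projection of a modality-specific vector into the shared space."""
    return [sum(w * x for w, x in zip(row, features)) for row in weights]

def cosine(a, b):
    """Cosine similarity between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

# Step 1: unimodal features (hypothetical encoder outputs, one size per modality).
audio_features = [0.9, 0.1, 0.4]       # e.g. energy in three frequency bands
video_features = [0.2, 0.8]            # e.g. smoke score, vibration score
text_features = [1.0, 0.0, 0.0, 0.5]   # e.g. a tiny encoding of the question

# Step 2: projections into a shared 2-dimensional space.
# These matrices are illustrative assumptions; real models learn them.
W_AUDIO = [[1.0, 0.0, 0.0], [0.0, 1.0, 1.0]]
W_VIDEO = [[0.0, 1.0], [1.0, 0.0]]
W_TEXT = [[1.0, 0.0, 0.0, 0.0], [0.0, 0.0, 0.0, 1.0]]

shared = {
    "audio": project(audio_features, W_AUDIO),
    "video": project(video_features, W_VIDEO),
    "text": project(text_features, W_TEXT),
}

# Step 3: with everything in one space, cross-modal comparison is a single call.
audio_video_similarity = cosine(shared["audio"], shared["video"])
audio_text_similarity = cosine(shared["audio"], shared["text"])
print(f"audio vs video: {audio_video_similarity:.3f}")
print(f"audio vs text:  {audio_text_similarity:.3f}")
```

A generation step would then condition on these fused vectors; that part is omitted because it depends entirely on the model architecture.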

There are three main strategies for this fusion:

- Early Fusion: Combining raw data right at the start. This allows the model to learn deep connections but requires massive computational power.

- Late Fusion: Processing each modality separately and combining the results at the end. This is faster but might miss subtle cross-modal cues.

- Hybrid Fusion: A mix of both, which is becoming the standard for high-performance models like Google Gemini 2.0.
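To make the trade-off concrete, here is a minimal sketch contrasting early and late fusion on the engine example. The scoring functions are deliberately simplistic assumptions (a per-timestep product for the joint model, a per-modality peak for the separate models); they are not taken from any named system. The sketch shows why late fusion can miss timing cues: it only sees each modality's summary, so it cannot tell whether the sound and the vibration happened at the same moment.

```python
def early_fusion_score(audio, video):
    """Fuse first: pair audio and video per timestep, then score the pairs.
    The joint score is high only when both signals peak at the same time."""
    joint_features = list(zip(audio, video))
    return max(a * v for a, v in joint_features)

def late_fusion_score(audio, video, audio_weight=0.5):
    """Score each modality on its own, then combine the two summaries."""
    audio_score = max(audio)
    video_score = max(video)
    return audio_weight * audio_score + (1.0 - audio_weight) * video_score

# Per-timestep anomaly signals in [0, 1] (toy values).
audio = [0.1, 0.9, 0.1]             # "clunk" at timestep 1
video_in_sync = [0.1, 0.8, 0.1]     # vibration at timestep 1
video_out_of_sync = [0.8, 0.1, 0.1] # vibration at timestep 0

for name, video in [("in sync", video_in_sync), ("out of sync", video_out_of_sync)]:
    early = early_fusion_score(audio, video)
    late = late_fusion_score(audio, video)
    print(f"{name:12s} early={early:.2f} late={late:.2f}")
```

Late fusion returns the same score for both clips, while early fusion separates them. A hybrid design would keep the cheap per-modality scores and add a joint term only for the feature pairs where timing matters.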

Key Players and Model Architectures

The landscape of multimodal AI is dominated by a few key architectures and players who have pushed the boundaries of what's possible. Understanding who builds what helps you choose the right tool for your needs.

| Model | Developer | Key Strength | Latency/Speed |
|---|---|---|---|
| GPT-4o | OpenAI | Real-time video processing and natural speech interaction | ~230ms latency for video |
| Llama 4 | Meta | Open-source flexibility with strong speech and reasoning capabilities | Variable based on hardware |
| Gemini 2.0 | Google | Advanced audio-visual synchronization and large context windows | Optimized for cloud inference |
| Claude 3 | Anthropic | High reliability in enterprise workflows and reduced hallucination | Standard API speeds |
| LLaVA | Open Source Community | Accessibility for developers building custom vision-language apps | Depends on local GPU |

OpenAI's GPT-4o set a new benchmark when it launched in May 2024 with its ability to handle 30fps video with minimal lag. Meanwhile, Meta's release of Llama 4 democratized access to high-quality speech and reasoning, allowing developers to build their own multimodal agents without paying per-token fees. For those needing strict privacy or control, open-source frameworks like LLaVA (Large Language and Vision Assistant) remain popular, boasting thousands of active contributors on GitHub.

Real-World Applications Beyond Hype

It's easy to get caught up in the novelty of AI generating art from text prompts. But the real value of multimodal AI lies in solving complex, multi-sensory problems. Here is where the technology is delivering measurable ROI in 2026.

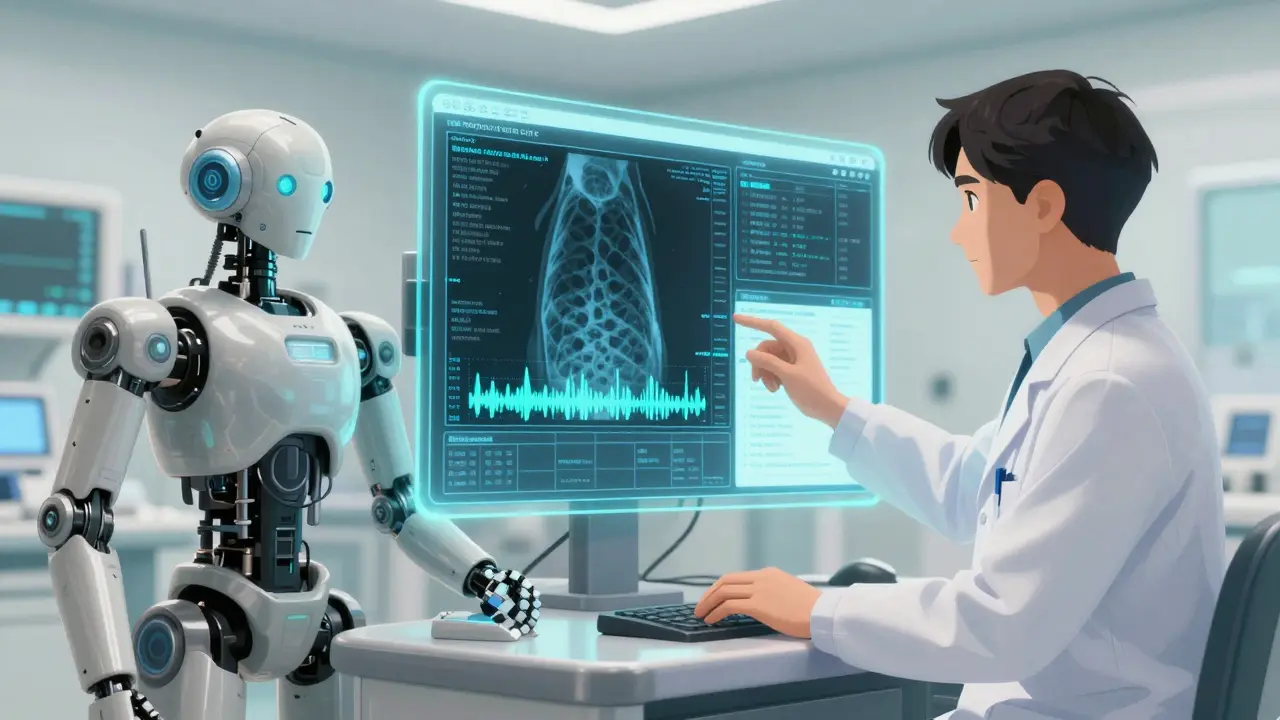

Healthcare Diagnostics: In radiology, doctors don't just look at X-rays; they read patient histories and listen to descriptions of pain. Multimodal systems now combine medical imaging with electronic health records. UnitedHealthcare reported reducing radiology report turnaround time from 48 hours to just 4.7 hours using these tools, while maintaining 98.3% diagnostic accuracy. The AI can spot a shadow on an X-ray and cross-reference it with the patient's recent cough symptoms described in their chart.

Manufacturing Quality Control: Factories are noisy places. Visual inspection cameras often miss defects that make a specific sound. By integrating visual sensors with audio stream analysis, manufacturers have reduced false positives by over 50%. If a machine looks fine but sounds "off," the multimodal system flags it for maintenance before a breakdown occurs.

Customer Service: Imagine a support bot that can see your screen, hear your frustration in your voice tone, and read your chat history. Systems like these have reduced average handling time by 34% in major enterprises. They don't just answer questions; they de-escalate situations by recognizing emotional cues in audio and visual contexts.

The Challenges You Can't Ignore

Multimodal AI is powerful, but it comes with significant costs and risks. Before implementing these systems, you need to be aware of the hurdles.

Computational Cost: Processing multiple modalities simultaneously is expensive. Codica’s technical analysis shows that inference costs for multimodal systems are roughly 3.7 times higher than single-modality text models. Training data preparation also takes longer: expect 8 to 12 weeks of specialized curation compared to just a few weeks for text-only models.
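For budgeting, the multiplier translates into a quick back-of-the-envelope estimate. The sketch below applies the 3.7x figure quoted above to a baseline; the baseline spend is a made-up number for illustration, and the function name is hypothetical. Real costs depend on provider pricing, input sizes, and modality mix.

```python
# Multiplier quoted above from Codica's analysis; treat it as a rough average.
MULTIMODAL_COST_MULTIPLIER = 3.7

def estimate_multimodal_cost(text_only_cost, multiplier=MULTIMODAL_COST_MULTIPLIER):
    """Scale a known text-only inference cost to a rough multimodal estimate."""
    if text_only_cost < 0:
        raise ValueError("cost must be non-negative")
    return text_only_cost * multiplier

baseline = 1_000.00  # hypothetical monthly text-only inference spend, in USD
estimate = estimate_multimodal_cost(baseline)
print(f"Text-only: ${baseline:,.2f}  Multimodal estimate: ${estimate:,.2f}")
```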

Modality Hallucination: This is a critical safety issue. Dr. Marcus Chen from Stanford warns that current systems suffer from "modality hallucination" in about 22% of complex reasoning tasks. This means the AI might confidently state that a person in a video is wearing a red shirt when they are actually wearing blue, or misinterpret a spoken command due to background noise. In high-stakes fields like medicine or autonomous driving, these inconsistencies can be dangerous.

Data Alignment Issues: Synchronizing temporal data is hard. If you are analyzing a video, the audio track must align precisely with the visual frames. About 78% of practitioners report struggling to synchronize video and audio streams during implementation, which leads to confusing outputs.
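The core of the alignment problem is mapping a moment on the audio timeline to the video frame that was on screen at that moment. A minimal sketch follows, assuming a constant frame rate and a known, fixed offset between the two tracks; both assumptions often fail in practice (variable frame rates, clock drift), which is part of why practitioners struggle. The function and parameter names are illustrative.

```python
import math

def audio_time_to_frame(t_seconds, fps, audio_offset=0.0):
    """Return the index of the video frame shown at audio time t_seconds.

    audio_offset is how many seconds after the video start the audio track
    begins (negative if the audio starts first).
    """
    if fps <= 0:
        raise ValueError("fps must be positive")
    video_time = t_seconds + audio_offset
    if video_time < 0:
        raise ValueError("audio event falls before the first video frame")
    return math.floor(video_time * fps)

def events_to_frames(event_times, fps, audio_offset=0.0):
    """Map a list of audio event timestamps to (timestamp, frame index) pairs."""
    return [(t, audio_time_to_frame(t, fps, audio_offset)) for t in event_times]

# A "clunk" heard 13.5 seconds into a 30 fps clip lands on frame 405.
print(audio_time_to_frame(13.5, fps=30))
```

Once audio events carry frame indices, the fusion step can compare the sound against exactly the frames it coincided with instead of the clip as a whole.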

Implementation Strategy for Developers

If you are looking to integrate multimodal capabilities into your applications, here is a practical roadmap based on current industry standards.

1. Choose Your Stack: Decide between proprietary APIs and open-source models. If you need speed and ease of use, start with OpenAI’s GPT-4o or Anthropic’s Claude 3 APIs. If you need cost control or data privacy, look at Meta’s Llama 4 or the LLaVA framework. Note that PyTorch is the preferred framework for 82% of practitioners working with these models.

2. Prepare Aligned Data: Don't just dump text and images together. Your training or fine-tuning data needs precise alignment. Use tools that can timestamp audio events against video frames. Ensure your datasets cover edge cases: what happens when the audio is silent? What happens when the image is blurry?

3. Implement Hybrid Fusion: For most applications, a hybrid approach works best. Use early fusion for features that are tightly coupled (like lip movements and speech) and late fusion for independent signals (like background noise and text sentiment).

4. Monitor for Consistency: Build evaluation pipelines that check for cross-modal consistency. If the AI generates an image and a caption, does the caption accurately describe the image? Automated tests should flag any discrepancies immediately.
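As an illustration of step 4, here is a minimal sketch of one automated cross-modal check: comparing colors claimed in a generated caption against attributes reported for the image. It assumes you already have a separate vision model or detector producing an object-to-color mapping; that detector, the color vocabulary, and the function name are all hypothetical. A real pipeline would cover far more than colors, but the structure (extract claims from one modality, verify against another, flag mismatches) stays the same.

```python
import re

# Small illustrative vocabulary; extend it for real use.
COLORS = {"red", "blue", "green", "black", "white", "yellow"}

def find_color_conflicts(caption, detected_colors):
    """Flag objects whose caption color disagrees with the detected color.

    detected_colors maps an object name to the color reported by a
    (hypothetical) vision model, e.g. {"shirt": "blue"}.
    Returns a list of (object, caption_color, detected_color) tuples.
    """
    tokens = re.findall(r"[a-z]+", caption.lower())
    conflicts = []
    # Look at each "<color> <object>" word pair in the caption.
    for previous, word in zip(tokens, tokens[1:]):
        if word in detected_colors and previous in COLORS:
            if previous != detected_colors[word]:
                conflicts.append((word, previous, detected_colors[word]))
    return conflicts

caption = "A person wearing a red shirt stands next to a white car."
detected = {"shirt": "blue", "car": "white"}
for obj, said, seen in find_color_conflicts(caption, detected):
    print(f"Mismatch on '{obj}': caption says {said}, detector saw {seen}")
```

In an evaluation pipeline, a non-empty result would fail the test case and route the sample to review.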

The Future: Edge Computing and Agentic AI

We are moving toward a future where multimodal AI lives on your devices, not just in the cloud. Qualcomm’s Snapdragon X Elite chips, optimized for on-device multimodal processing, promise to bring low-latency AI to smartphones and AR glasses by mid-2026. This reduces privacy risks since your data never leaves your phone.

Furthermore, the next big trend is agentic capabilities. Instead of just answering questions, multimodal agents will autonomously complete multi-step tasks. Imagine an agent that watches your home security camera, hears a break-in, locks the smart doors, and calls the police, all without human intervention. However, this raises serious ethical and regulatory questions, especially as the EU’s AI Act phases in strict oversight of high-risk applications.

What is the difference between multimodal AI and traditional AI?

Traditional AI models typically process only one type of data, such as text or images, in isolation. Multimodal AI integrates multiple data types (text, image, audio, video) simultaneously, allowing it to understand relationships between them. For example, a traditional model might identify a dog in a photo, but a multimodal model can identify the dog, hear its bark, and understand a caption describing its breed.

Is multimodal AI more expensive to run than text-only AI?

Yes, significantly. Inference costs for multimodal systems are approximately 3.7 times higher than single-modality text models due to the increased computational complexity of processing and fusing different data streams. Additionally, training data preparation takes longer and requires more specialized resources.

What are the biggest risks of using multimodal generative AI?

The primary risks include "modality hallucination," where the AI incorrectly interprets or generates conflicting information across different modes (e.g., describing a visual scene inaccurately). There are also concerns about deepfake proliferation, privacy issues related to multi-sensor data collection, and high energy consumption during training.

Which companies are leading the multimodal AI market in 2026?

The market is dominated by Big Tech platforms including OpenAI (GPT-4o), Google (Gemini 2.0), and Meta (Llama 4). Anthropic (Claude 3) is also a major player, particularly in enterprise applications. Open-source communities contribute significantly through frameworks like LLaVA.

Can multimodal AI replace human experts in fields like healthcare?

Not entirely, but it acts as a powerful assistant. In healthcare, multimodal systems have improved diagnostic accuracy and speed, such as reducing radiology report times. However, due to risks like hallucination and the need for nuanced judgment, human oversight remains critical, especially in high-stakes decisions.