Imagine you’re chatting with an AI assistant. You share a personal story to get advice. Moments later, that same story appears in a training dataset for the next version of the model. Did you agree to that? Most users don’t realize their words can be used this way. This is the core problem with consent management in Large Language Model (LLM)-powered applications. Traditional cookie banners don’t cut it anymore. We need systems that handle the unique ways AI processes, stores, and learns from your data.

The stakes are high. Regulations like the General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA) restrict how personal data can be collected and used, and in many cases require clear permission or an easy way to opt out. But LLMs work differently from standard websites. They retain context, fine-tune on user inputs, and sometimes memorize sensitive details. If you’re building or using these tools, understanding how consent works is no longer optional. It’s critical for trust and legal safety.

Why Standard Consent Fails for AI

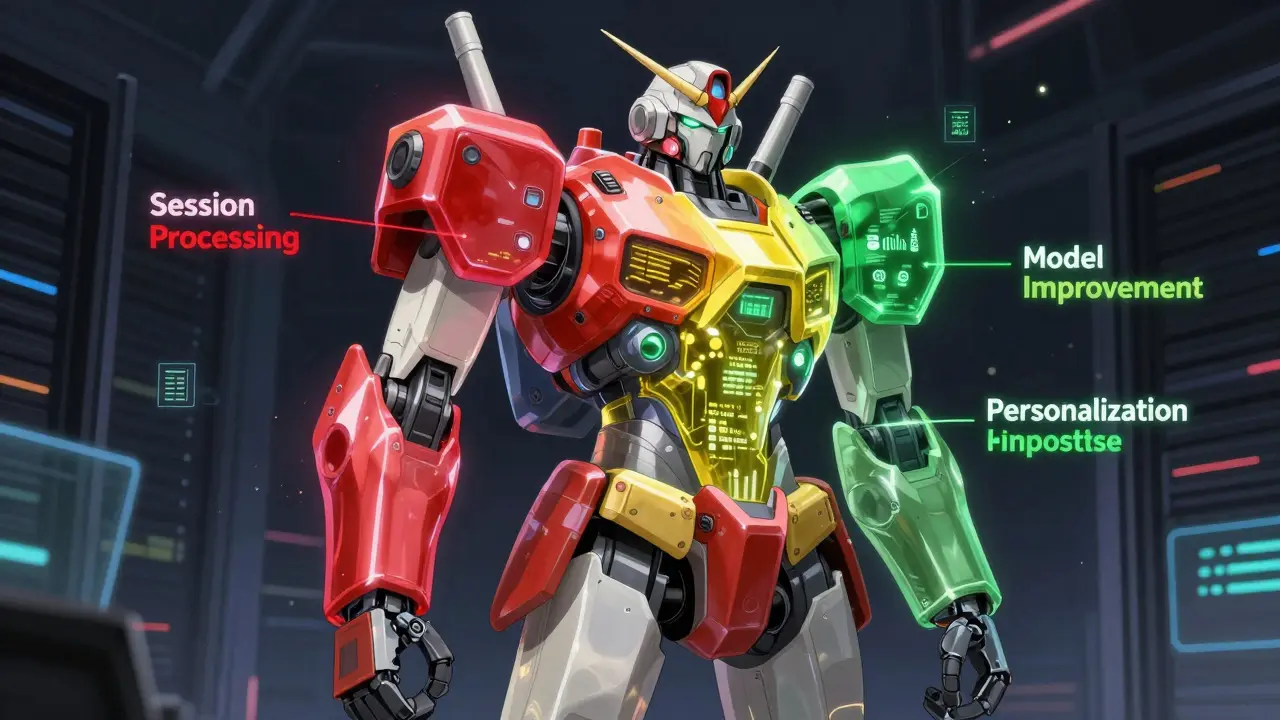

Traditional Consent Management Platforms (CMPs) were built for cookies. They ask if you want tracking pixels or marketing emails. That’s straightforward. LLMs complicate things because they use data in three distinct ways:

- Session processing: Using your input only to generate an immediate response. The data disappears after the chat ends.

- Model improvement: Anonymizing your inputs to help train the AI for future versions. This improves accuracy but raises privacy concerns.

- Personalization: Storing your preferences or history to tailor future interactions. This creates a persistent profile.

A simple “Accept All” button doesn’t let users choose between these options. According to Dr. Michelle Dennedy, CEO of The Privacy Consulting Group, traditional checkbox models are dangerously inadequate for LLM environments where data usage boundaries are inherently ambiguous. Users need granular control. They should decide if they want their words to improve the model or just help them right now.
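
One way to picture granular control is a consent record that tracks each of the three purposes separately. The sketch below is a minimal illustration, not a reference design: the names `Purpose` and `ConsentRecord` are hypothetical, and it assumes nothing is granted until the user says so.

```python
from dataclasses import dataclass, field
from enum import Enum


class Purpose(Enum):
    """The three data uses described above (names are illustrative)."""
    SESSION = "session_processing"
    TRAINING = "model_improvement"
    PERSONALIZATION = "personalization"


@dataclass
class ConsentRecord:
    user_id: str
    # Each purpose is granted on its own; nothing is granted by default.
    granted: set = field(default_factory=set)

    def grant(self, purpose: Purpose) -> None:
        self.granted.add(purpose)

    def withdraw(self, purpose: Purpose) -> None:
        self.granted.discard(purpose)

    def allows(self, purpose: Purpose) -> bool:
        return purpose in self.granted


record = ConsentRecord(user_id="user-123")
record.grant(Purpose.SESSION)
print(record.allows(Purpose.SESSION))   # True
print(record.allows(Purpose.TRAINING))  # False
```

Because each purpose is a separate entry, a user can allow the chat to work right now without also agreeing to training or profiling.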

Key User Rights in LLM Interactions

When interacting with AI, users have specific rights that go beyond standard web privacy. Here’s what matters most:

- Right to Withdrawal: Users must be able to opt out of training data usage at any time. Crucially, this means their past data should stop being used in new model updates.

- Right to Explanation: Users deserve to know exactly how their data influences the AI’s behavior. Vague notices aren’t enough.

- Right to Deletion: If a user deletes their account or chat history, the system must ensure that data isn’t lingering in cached contexts or fine-tuning datasets.

- Right to Contextual Consent: Permissions should be requested when relevant, not buried in a static policy page.

In January 2026, MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) audited 32 major AI applications. They found that 67% failed to properly enforce “no training data” preferences. Many apps ignored user choices during model fine-tuning. This gap shows why robust technical enforcement is essential.
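
The right to withdrawal is easier to enforce and audit when consent changes are stored as events rather than as a single overwritten flag. The following is a rough sketch under that assumption; `ConsentLedger` and its methods are hypothetical names, and events are assumed to be appended in chronological order.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ConsentEvent:
    user_id: str
    purpose: str
    granted: bool
    at: datetime


class ConsentLedger:
    """Append-only log of consent decisions (illustrative only)."""

    def __init__(self):
        self._events = []

    def record(self, user_id, purpose, granted, at=None):
        at = at or datetime.now(timezone.utc)
        self._events.append(ConsentEvent(user_id, purpose, granted, at))

    def is_granted(self, user_id, purpose):
        # The most recent event for this user and purpose wins.
        # No event at all means no consent.
        state = False
        for event in self._events:
            if event.user_id == user_id and event.purpose == purpose:
                state = event.granted
        return state

    def history(self, user_id):
        # Full trail for audits or for answering "what did I agree to?"
        return [e for e in self._events if e.user_id == user_id]


ledger = ConsentLedger()
ledger.record("user-123", "training", granted=True)
ledger.record("user-123", "training", granted=False)  # later withdrawal
print(ledger.is_granted("user-123", "training"))  # False
```

Keeping the history also gives a training pipeline something concrete to check before each new model update.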

Technical Challenges in Implementation

Building effective consent management for LLMs requires more than a banner. It needs deep integration into the AI pipeline. Developers face several hurdles:

Real-time Enforcement: The system must check consent status before every inference. If a user opts out of personalization, the app must mask their identity in real time. Osano’s 2025 testing showed this adds 85-120 milliseconds to API response times. That’s more than the 15-30 ms overhead for standard web apps, but necessary for compliance.
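
A pre-inference check of this kind might look like the sketch below. It is a simplified assumption of how such a gate could work: `prepare_prompt` is a hypothetical helper, consents are passed in as a plain set of strings, and the masking covers only the user's name and email addresses, which is far narrower than real PII detection.

```python
import re

# Deliberately simple pattern; real systems need broader PII detection.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def prepare_prompt(prompt, user_name, consents):
    """Return the text that may be sent to the model under these consents."""
    if "session" not in consents:
        # Without session consent the request is not processed at all.
        raise PermissionError("no consent for session processing")
    if "personalization" not in consents:
        # Mask identifying details before the model sees them.
        prompt = prompt.replace(user_name, "[USER]")
        prompt = EMAIL_RE.sub("[EMAIL]", prompt)
    return prompt


masked = prepare_prompt("I'm Ada, mail ada@example.com", "Ada", {"session"})
print(masked)  # I'm [USER], mail [EMAIL]
```

Running the check on every request is what produces the added latency: the gate sits directly in the request path.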

Data Retention vs. Memory: LLMs often keep context windows open for coherence. If a user revokes consent mid-conversation, does the AI forget previous parts of the chat? Or does it continue using that data until the session ends? Clear policies are needed here.
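
One possible answer is a "forget immediately" policy: once consent is revoked, earlier turns are dropped and each new message is handled on its own. The sketch below illustrates only that one policy; the `Conversation` class is hypothetical, and a product could reasonably choose a different rule as long as it is documented.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    turns: list = field(default_factory=list)
    retain_context: bool = True

    def add_turn(self, role, text):
        if not self.retain_context:
            # After revocation, keep nothing but the newest message.
            self.turns.clear()
        self.turns.append((role, text))

    def revoke(self):
        # Drop earlier turns so they cannot reach the model again.
        self.retain_context = False
        self.turns.clear()

    def build_context(self):
        return list(self.turns)


chat = Conversation()
chat.add_turn("user", "My diagnosis is X.")
chat.add_turn("assistant", "Here is some advice.")
chat.revoke()
chat.add_turn("user", "What is the weather like?")
print(chat.build_context())  # [('user', 'What is the weather like?')]
```

The trade-off is coherence: the assistant loses the thread of the conversation, which is the cost of honoring the revocation right away.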

Fine-Tuning Isolation: When companies fine-tune models on user data, they must ensure opted-out users’ inputs are excluded. Privado AI’s 2025 guide highlights that automated monitoring is required to block actions violating user preferences across integrated systems.
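
At its simplest, fine-tuning isolation is a filter applied when the training set is assembled. The sketch below assumes each example is a dictionary with a `user_id` key and that the pipeline has a set of users who currently consent to training; both are illustrative assumptions. It uses an allow-list, so an unknown user is excluded by default.

```python
def filter_training_examples(examples, opted_in_users):
    """Keep only examples from users who currently consent to training."""
    kept = []
    dropped = 0
    for example in examples:
        if example["user_id"] in opted_in_users:
            kept.append(example)
        else:
            dropped += 1  # counted so the exclusion can be logged and audited
    return kept, dropped


examples = [
    {"user_id": "u1", "text": "How do I reset my password?"},
    {"user_id": "u2", "text": "My account number is ..."},
    {"user_id": "u3", "text": "Summarize this article."},
]
kept, dropped = filter_training_examples(examples, opted_in_users={"u1", "u3"})
print(len(kept), dropped)  # 2 1
```

Running this filter at the start of every training cycle, against the latest consent state, is what makes a withdrawal apply to data submitted in the past.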

| Feature | Traditional Web CMP | LLM-Specific Consent |
|---|---|---|
| Primary Focus | Cookies & Tracking | Training Data & Context |
| Granularity | Low (Analytics, Marketing) | High (Session, Training, Personalization) |
| Enforcement Point | Browser Level | API Inference Pipeline |
| Latency Impact | Minimal (15-30 ms) | Moderate (85-120 ms) |
| User Comprehension | Low (Static Banners) | Higher (Contextual Prompts) |

Regulatory Landscape in 2026

The rules are tightening fast. In March 2025, the European Data Protection Board (EDPB) stated that training LLMs on non-consented personal data likely violates GDPR Article 6. This puts pressure on developers to build explicit consent mechanisms.

In the US, the California Privacy Protection Agency released draft regulations in September 2025 requiring specific, granular consent for AI training data. Meanwhile, the EU AI Act, which entered into force in August 2024 with obligations phasing in over the following years, requires human oversight for high-risk systems. These laws force companies to move beyond vague privacy policies.

NIST also released its draft AI Risk Management Framework Consent Module in January 2026. It recommends continuous consent verification throughout the AI lifecycle. One-time permissions are becoming obsolete. Systems must constantly check if user preferences have changed.
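
Continuous verification does not have to mean a database lookup on every token. One common compromise is to cache consent briefly and re-check once the cached copy is too old. The sketch below illustrates that idea only; `ConsentCache`, the `fetch` callback, and the 60-second default are assumptions, not anything the draft prescribes.

```python
import time


class ConsentCache:
    """Re-fetches a user's consents once the cached copy is too old."""

    def __init__(self, fetch, max_age_seconds=60.0, clock=time.monotonic):
        self._fetch = fetch            # callable: user_id -> iterable of purposes
        self._max_age = max_age_seconds
        self._clock = clock            # injectable so the class can be tested
        self._entries = {}             # user_id -> (fetched_at, consents)

    def get(self, user_id):
        now = self._clock()
        entry = self._entries.get(user_id)
        if entry is None or now - entry[0] >= self._max_age:
            # Stale or missing: ask the source of truth again.
            entry = (now, frozenset(self._fetch(user_id)))
            self._entries[user_id] = entry
        return entry[1]


store = {"u1": {"session", "training"}}
fake_now = [0.0]
cache = ConsentCache(lambda uid: store[uid], max_age_seconds=30,
                     clock=lambda: fake_now[0])

print("training" in cache.get("u1"))  # True
store["u1"] = {"session"}             # user withdraws training consent
fake_now[0] = 30.0                    # cached copy has expired
print("training" in cache.get("u1"))  # False
```

The maximum age sets how long a withdrawal can go unnoticed, so it is a policy decision as much as a performance one.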

Best Practices for Developers

If you’re building an LLM application, follow these steps to protect user rights:

- Use Granular Toggles: Offer separate switches for session use, model training, and personalization. Don’t bundle them.

- Explain in Plain Language: Use natural language prompts during conversations. Microsoft’s Azure AI Consent Framework achieved 42% higher comprehension scores by explaining data usage in context.

- Implement Real-Time Checks: Integrate consent verification at pre-inference, processing, and post-response stages. Block prohibited data elements automatically.

- Provide Easy Withdrawal: Make opting out as easy as opting in. Ensure withdrawal stops future use of past data in training cycles.

- Avoid Consent Fatigue: Stanford HCI Group found abandonment rates increase by 33% after three prompts in one session. Space requests out logically.
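
The consent-fatigue point can be enforced with a small per-session budget. The sketch below is one hypothetical way to do it: the cap of three prompts echoes the Stanford figure above, while the minimum gap between prompts is an arbitrary illustrative value.

```python
class ConsentPromptBudget:
    """Limits how often a session may interrupt the user with consent prompts."""

    def __init__(self, max_prompts=3, min_turn_gap=4):
        self.max_prompts = max_prompts
        self.min_turn_gap = min_turn_gap
        self._prompt_turns = []  # turn numbers at which a prompt was shown

    def may_prompt(self, turn):
        if len(self._prompt_turns) >= self.max_prompts:
            return False  # session budget is used up
        if self._prompt_turns and turn - self._prompt_turns[-1] < self.min_turn_gap:
            return False  # too soon after the previous prompt
        return True

    def record_prompt(self, turn):
        self._prompt_turns.append(turn)


budget = ConsentPromptBudget(max_prompts=2, min_turn_gap=3)
budget.record_prompt(1)
print(budget.may_prompt(2))   # False
print(budget.may_prompt(4))   # True
budget.record_prompt(4)
print(budget.may_prompt(10))  # False
```

When the budget says no, the safe default is to proceed without the extra permission rather than to assume it.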

Enterprise adoption is growing. Financial services (42%) and healthcare (37%) lead in specialized LLM consent implementation due to stricter regulations. Retail and media lag behind at 19% and 14%. Early adopters report a 15-25% drop in response relevance when strict consent enforcement redacts personalization data. Balancing utility and privacy is key.

The Future of AI Consent

The market is shifting. Gartner projects AI-specific consent management will grow from $58 million in 2025 to $412 million by 2027. By 2028, 75% of enterprise LLM deployments are projected to use specialized consent systems. We’re moving away from static banners toward conversational interfaces.

Gartner predicts that by 2027, 60% of leading AI platforms will implement conversational consent interfaces. Imagine an AI saying, “I’d like to save this tip to help me answer better next time. Is that okay?” This approach builds trust while ensuring compliance. Tools like OneTrust’s planned “Contextual Consent for Generative AI” and Osano’s “LLM Consent Verification SDK” aim to make this easier.
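
A conversational prompt still needs a strict rule for reading the answer. The sketch below assumes a simple policy where only a clear "yes" counts as consent and anything else is treated as a refusal; the word list and the `interpret_reply` helper are hypothetical and do not reflect how any named product works.

```python
CONSENT_QUESTION = (
    "I'd like to save this tip to help me answer better next time. "
    "Is that okay?"
)

# Only unambiguous agreement counts; the list is illustrative.
AFFIRMATIVE = {"yes", "y", "sure", "ok", "okay", "yes please"}


def interpret_reply(reply: str) -> bool:
    """Return True only for a clear yes; anything ambiguous means no consent."""
    normalized = reply.strip().lower().rstrip(".!")
    return normalized in AFFIRMATIVE


print(interpret_reply("Yes!"))         # True
print(interpret_reply("maybe later"))  # False
```

Defaulting to "no" keeps the friendly interface from turning silence or hesitation into permission.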

However, risks remain. Only 12 of 47 tested LLM applications provided meaningful withdrawal mechanisms in early 2026. As AI becomes more embedded in daily life, getting consent right isn’t just about avoiding fines. It’s about respecting the people who trust you with their words.

What is consent management in LLM applications?

It is the process of obtaining, documenting, and enforcing user permissions regarding how their data is collected, processed, and stored by Large Language Models. Unlike traditional web consent, it covers session processing, model training, and personalization.

How does GDPR affect LLM training data?

The EDPB has indicated that training LLMs on personal data without explicit consent likely violates GDPR Article 6. Companies must ensure users actively permit their data to be used for model improvement, not just for immediate responses.

Can users withdraw consent for AI training?

Yes, users have the right to withdraw consent. However, technical challenges exist. As of early 2026, many systems fail to stop using previously submitted data in new training cycles after withdrawal. Robust systems must isolate and exclude opted-out data immediately.

What is the difference between session data and training data?

Session data is used only to generate an immediate response and is typically discarded afterward. Training data is anonymized and used to update the model’s weights, improving its future performance. Users should be able to choose which applies to their inputs.

Why do LLM consent checks add latency?

Real-time consent verification requires checking user preferences against data elements before inference. In Osano’s 2025 testing, this added 85-120 milliseconds to API response times, compared with 15-30 ms of overhead for standard web apps. The check ensures prohibited data is masked or excluded dynamically.

What is conversational consent?

Conversational consent involves explaining data usage through natural dialogue during interactions rather than static banners. For example, an AI might ask for permission to save a specific insight. This improves user comprehension and trust.

Which industries are leading in LLM consent adoption?

Financial services (42%) and healthcare organizations (37%) lead due to stricter regulatory environments. Retail (19%) and media (14%) lag behind. Adoption is driven by both legal requirements and consumer demand for transparency.

How can developers prevent consent fatigue?

Limit the number of prompts per session. Stanford research shows abandonment increases by 33% after three requests. Use contextual timing, such as asking for training consent when the user provides valuable feedback, rather than interrupting flow randomly.